《機器學習_07_02_svm_軟間隔支援向量機》

一.簡介

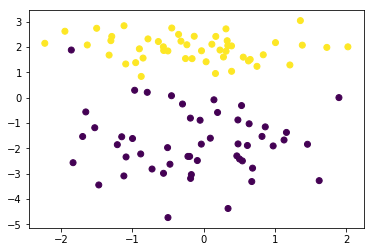

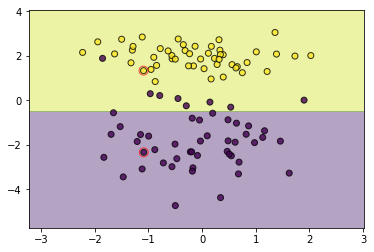

上一節介紹了硬間隔支援向量機,它可以在嚴格線性可分的數據集上工作的很好,但對於非嚴格線性可分的情況往往就表現很差了,比如:

import numpy as np

import matplotlib.pyplot as plt

import copy

import random

import os

os.chdir('../')

from ml_models import utils

from ml_models.svm import HardMarginSVM

%matplotlib inline

*** PS:請多試幾次,生成含雜訊點的數據***

from sklearn.datasets import make_classification

data, target = make_classification(n_samples=100, n_features=2, n_classes=2, n_informative=1, n_redundant=0,

n_repeated=0, n_clusters_per_class=1, class_sep=2.0)

plt.scatter(data[:,0],data[:,1],c=target)

<matplotlib.collections.PathCollection at 0x202a6f55a58>

#訓練

svm = HardMarginSVM()

svm.fit(data, target)

utils.plot_decision_function(data, target, svm, svm.support_vectors)

那怕僅含有一個異常點,對硬間隔支援向量機的訓練影響就很大,我們希望它能具有一定的包容能力,容忍哪些放錯的點,但又不能容忍過度,我們可以引入變數\(\xi\)和一個超參\(C\)來進行控制,原始的優化問題更新為如下:

s.t.y_i(w^Tx_i+b)\geq 1-\xi_i,i=1,2,…,N\\

\xi_i\geq0,i=1,2,…,N

\]

這裡\(C\)若越大,包容能力就越小,當取值很大時,就等價於硬間隔支援向量機,而\(\xi\)使得支援向量的間隔可以調整,不必像硬間隔那樣,嚴格等於1

Lagrange函數

關於原問題的Lagrange函數:

s.t.\mu_i\geq 0,\alpha_i\geq0,i=1,2,…,N

\]

二.對偶問題

對偶問題的求解過程我就省略了,與硬間隔類似,我這裡就直接寫最終結果:

s.t.\sum_{i=1}^N\alpha_iy_i=0,\\

0\leq\alpha_i\leq C,i=1,2,…,N

\]

可以發現與硬間隔的不同是\(\alpha\)加了一個上界的約束\(C\)

三.KKT條件

這裡就直接寫KKT條件看原優化變數與拉格朗日乘子之間的關係:

\frac{\partial L}{\partial b}=0\Rightarrow \alpha_i^*y_i=0(關係2)\\

\frac{\partial L}{\partial \xi}=0\Rightarrow C-\alpha_i^*-\mu_i^*=0(關係3)\\

\alpha_i^*(1-\xi_i^*-y_i({w^*}^Tx_i+b^*))=0(關係4)\\

\mu_i^*\xi_i^*=0(關係5)\\

y_i({w^*}^Tx_i+b^*)-1-\xi_i^*\geq0(關係6)\\

\xi_i^*\geq0(關係7)\\

\alpha_i^*\geq0(關係8)\\

\mu_i^*\geq0(關係9)\\

\]

四.\(w^*,b^*\)的求解

由KKT條件中的關係1,我們可以知道:

\]

對於\(b^*\)的求解,我們可以取某點,其\(0<\alpha_k^*<C\),由關係3,4,5可以推得到:\({w^*}^Tx_k+b^*=y_k\),所以:

\]

五.SMO求\(\alpha^*\)

好了,最終模型得求解落到了對\(\alpha^*\)得求解上,求解過程與硬間隔一樣,無非就是就是對\(\alpha\)多加了一個約束:\(\alpha_i^*<=C\),具體而言需要對\(\alpha_2^{new}\)的求解進行更新:

當\(y_1\neq y_2\)時:

H=min(C,C+\alpha_2^{old}-\alpha_1^{old})

\]

當\(y_1=y_2\)時:

H=min(C,\alpha_2^{old}+\alpha_1^{old})

\]

更新公式:

H & \alpha_2^{unc}> H\\

\alpha_2^{unc} & L \leq \alpha_2^{unc} \leq H\\

L & \alpha_2^{unc}<L

\end{matrix}\right.

\]

六.程式碼實現

"""

軟間隔支援向量機的smo實現,放到ml_models.svm模組中

"""

class SoftMarginSVM(object):

def __init__(self, epochs=100, C=1.0):

self.w = None

self.b = None

self.alpha = None

self.E = None

self.epochs = epochs

self.C = C

# 記錄支援向量

self.support_vectors = None

def init_params(self, X, y):

"""

:param X: (n_samples,n_features)

:param y: (n_samples,) y_i\in\{0,1\}

:return:

"""

n_samples, n_features = X.shape

self.w = np.zeros(n_features)

self.b = .0

self.alpha = np.zeros(n_samples)

self.E = np.zeros(n_samples)

# 初始化E

for i in range(0, n_samples):

self.E[i] = np.dot(self.w, X[i, :]) + self.b - y[i]

def _select_j(self, best_i):

"""

選擇j

:param best_i:

:return:

"""

valid_j_list = [i for i in range(0, len(self.alpha)) if self.alpha[i] > 0 and i != best_i]

best_j = -1

# 優先選擇使得|E_i-E_j|最大的j

if len(valid_j_list) > 0:

max_e = 0

for j in valid_j_list:

current_e = np.abs(self.E[best_i] - self.E[j])

if current_e > max_e:

best_j = j

max_e = current_e

else:

# 隨機選擇

l = list(range(len(self.alpha)))

seq = l[: best_i] + l[best_i + 1:]

best_j = random.choice(seq)

return best_j

def _meet_kkt(self, w, b, x_i, y_i, alpha_i):

"""

判斷是否滿足KKT條件

:param w:

:param b:

:param x_i:

:param y_i:

:return:

"""

if alpha_i < self.C:

return y_i * (np.dot(w, x_i) + b) >= 1

else:

return y_i * (np.dot(w, x_i) + b) <= 1

def fit(self, X, y2, show_train_process=False):

"""

:param X:

:param y2:

:param show_train_process: 顯示訓練過程

:return:

"""

y = copy.deepcopy(y2)

y[y == 0] = -1

# 初始化參數

self.init_params(X, y)

for _ in range(0, self.epochs):

if_all_match_kkt = True

for i in range(0, len(self.alpha)):

x_i = X[i, :]

y_i = y[i]

alpha_i_old = self.alpha[i]

E_i_old = self.E[i]

# 外層循環:選擇違反KKT條件的點i

if not self._meet_kkt(self.w, self.b, x_i, y_i, alpha_i_old):

if_all_match_kkt = False

# 內層循環,選擇使|Ei-Ej|最大的點j

best_j = self._select_j(i)

alpha_j_old = self.alpha[best_j]

x_j = X[best_j, :]

y_j = y[best_j]

E_j_old = self.E[best_j]

# 進行更新

# 1.首先獲取無裁剪的最優alpha_2

eta = np.dot(x_i - x_j, x_i - x_j)

# 如果x_i和x_j很接近,則跳過

if eta < 1e-3:

continue

alpha_j_unc = alpha_j_old + y_j * (E_i_old - E_j_old) / eta

# 2.裁剪並得到new alpha_2

if y_i == y_j:

L = max(0., alpha_i_old + alpha_j_old - self.C)

H = min(self.C, alpha_i_old + alpha_j_old)

else:

L = max(0, alpha_j_old - alpha_i_old)

H = min(self.C, self.C + alpha_j_old - alpha_i_old)

if alpha_j_unc < L:

alpha_j_new = L

elif alpha_j_unc > H:

alpha_j_new = H

else:

alpha_j_new = alpha_j_unc

# 如果變化不夠大則跳過

if np.abs(alpha_j_new - alpha_j_old) < 1e-5:

continue

# 3.得到alpha_1_new

alpha_i_new = alpha_i_old + y_i * y_j * (alpha_j_old - alpha_j_new)

# 4.更新w

self.w = self.w + (alpha_i_new - alpha_i_old) * y_i * x_i + (alpha_j_new - alpha_j_old) * y_j * x_j

# 5.更新alpha_1,alpha_2

self.alpha[i] = alpha_i_new

self.alpha[best_j] = alpha_j_new

# 6.更新b

b_i_new = y_i - np.dot(self.w, x_i)

b_j_new = y_j - np.dot(self.w, x_j)

if self.C > alpha_i_new > 0:

self.b = b_i_new

elif self.C > alpha_j_new > 0:

self.b = b_j_new

else:

self.b = (b_i_new + b_j_new) / 2.0

# 7.更新E

for k in range(0, len(self.E)):

self.E[k] = np.dot(self.w, X[k, :]) + self.b - y[k]

# 顯示訓練過程

if show_train_process is True:

utils.plot_decision_function(X, y2, self, [i, best_j])

utils.plt.pause(0.1)

utils.plt.clf()

# 如果所有的點都滿足KKT條件,則中止

if if_all_match_kkt is True:

break

# 計算支援向量

self.support_vectors = np.where(self.alpha > 1e-3)[0]

# 顯示最終結果

if show_train_process is True:

utils.plot_decision_function(X, y2, self, self.support_vectors)

utils.plt.show()

def get_params(self):

"""

輸出原始的係數

:return: w

"""

return self.w, self.b

def predict_proba(self, x):

"""

:param x:ndarray格式數據: m x n

:return: m x 1

"""

return utils.sigmoid(x.dot(self.w) + self.b)

def predict(self, x):

"""

:param x:ndarray格式數據: m x n

:return: m x 1

"""

proba = self.predict_proba(x)

return (proba >= 0.5).astype(int)

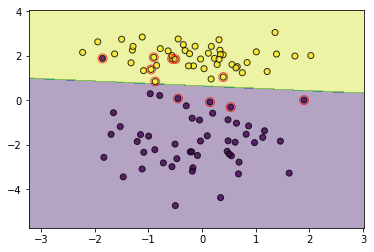

svm = SoftMarginSVM(C=3.0)

svm.fit(data, target)

utils.plot_decision_function(data, target, svm, svm.support_vectors)

通過控制C可以調節寬容度,設置一個大的C可以取得和硬間隔一樣的效果

svm = SoftMarginSVM(C=1000000)

svm.fit(data, target)

utils.plot_decision_function(data, target, svm, svm.support_vectors)

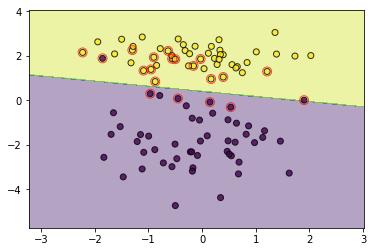

有時,太過寬容也不一定好

svm = SoftMarginSVM(C=0.01)

svm.fit(data, target)

utils.plot_decision_function(data, target, svm, svm.support_vectors)

七.支援向量

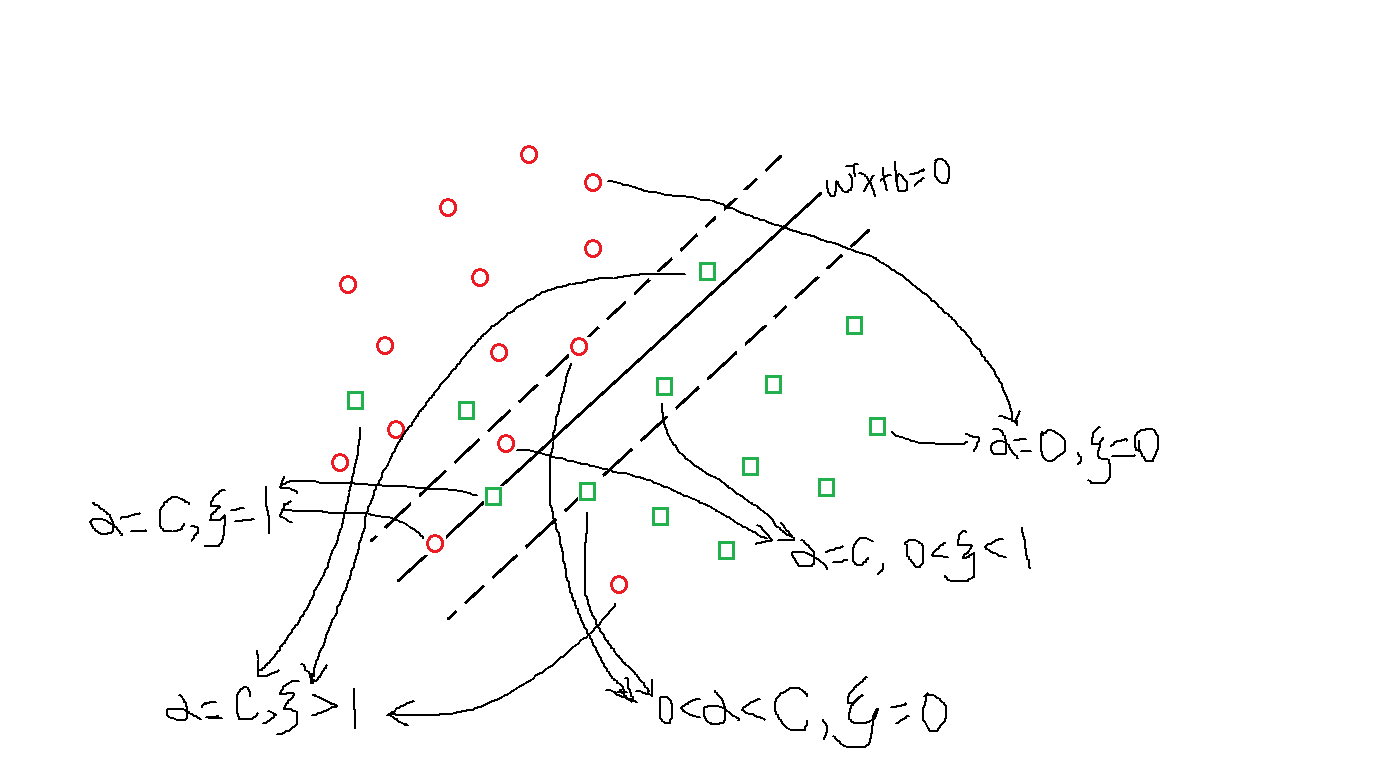

軟間隔支援向量機的支援向量複雜一些,因為對於\(\alpha>0\)有許多種情況,如下圖所示,大概可以分為4類:

(1)\(0<\alpha_i<C,\xi_i=0\):位於間隔邊界上;

(2)\(\alpha_i=C,0<\xi_i<1\):分類正確,位於間隔邊界與分離超平面之間;

(3)\(\alpha_i=C,\xi_i=1\):位於分離超平面上;

(4)\(\alpha_i=C,\xi_i>1\):位於錯誤分類的一側