【線上測試之後的應用】基於MySQL+MHA+Haproxy構建高可用負載均衡資料庫集群(詳解)

- 2020 年 3 月 8 日

- 筆記

這裡我們先介紹一下MHA是什麼,其次就是它的應用與測試,同時為了大家呈現了數據備份案例,最後總結了使用情況以及注意事項和解決辦法

一、MHA 概述

MHA(Master High Availability)是可以在MySQL上使用的一套高可用方案、所編寫的語言為Perl

從名字上我們可以看到、MHA的目的就是為了維護Master的高可用、也是是一套相對比較成熟的MySQL高可用解決方案

在MySQL故障進行切換的時候、MHA可做到10-30秒之間自動完成故障切換工作、選擇一個最優的從庫作為新的Master

在這個故障切換的同時、MHA也可以在最大程度上保證數據的一致性、以此來達到資料庫的高可用性和數據的一致性

MHA在故障切換的過程中、會試圖從異常的Master保存binlog、以此來確保數據不丟失

這裡存在一個問題、如果Master無法通過SSH訪問了、那麼MHA也就沒辦法保存binlog了、故障確實轉移了、新的Master也確實選舉出來了但是有些數據也丟失了

所以使用MySQL的半同步複製就可以降低數據丟失的風險、MHA可以與半同步結合、如果只有一個Slave收到了最新的binlog、那麼MHA可將最新的binlog應用到其它的節點、以此來保證數據的一致性

部署MHA最少需要三台節點、一主兩從、其中一台為Master、另外兩個從作為備選Master、在不出於成本考慮的情況下、生產環境最好是一主三從、因為一台Master出現異常還有兩台Slave節點可讀

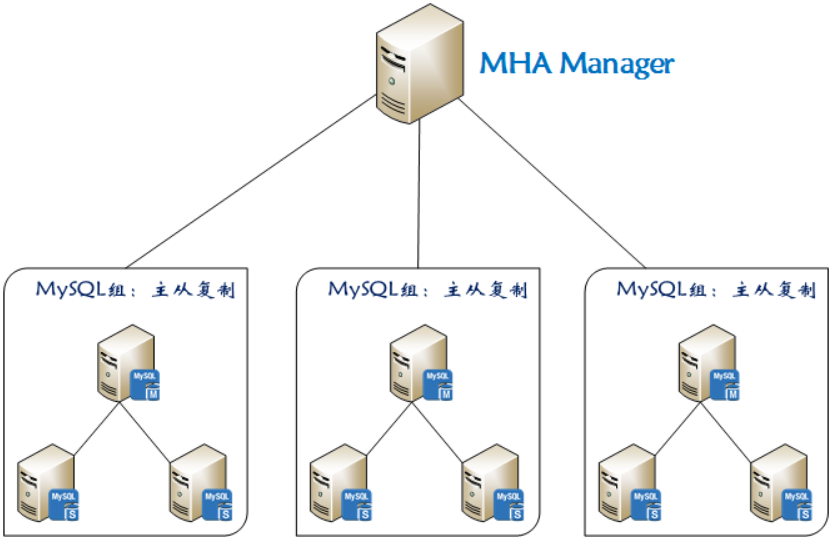

1)MHA 組成結構

MHA的組成分為兩部分、分別為:管理節點(MHA Manager)與 數據節點(MHA Node)

MHA-Manager:可部署在單個節點上、它會定時去檢測集群中的節點、當發現Master故障它就會自動將擁有最新數據的Slave提升為新的Master、然後將其它所有Slave指向新的Master MHA-Node: 需要部署在每台MySQL節點上、MHA-Node具備中繼日誌清理、中繼日誌對比、binlog數據保存等功能

2)MHA 工作原理

MHA兩大核心功能:Master宕機後自動切換到新的Master、故障切換的時候保證整個MySQL集群的數據丟失儘可能最小

1、如果Master出現了異常、MHA則嘗試從異常的Master上保存binlog文件 2、查找最新更新的Slave、因為這台Slave具有用於恢復的所有中繼日誌 3、查找到以後選擇它作為新的Master、並應用中繼日誌到其它的Slave節點、同時也會激活虛擬IP 4、應用成功以後、最後讓其它的Slave節點連接最新的Master並進行數據複製

3)MHA 常用工具

1、MHA-Manager 所帶工具

masterha_manger #啟動MHA masterha_stop #停止MHA masterha_check_ssh #檢查SSH遠程情況 masterha_check_repl #檢查主從複製情況 masterha_check_status #檢測MHA運行狀態 masterha_master_monitor #檢測master是否宕機 masterha_master_switch #手動進行故障切換 masterha_secondary_check #多網線路檢查Master節點 masterha_conf_host #添加或刪除配置的server資訊

2、MHA-Node 所帶工具

purge_relay_logs #清除無用的中繼日誌 save_binary_logs #保存宕機master的binlog數據 filter_mysqlbinlog #過濾掉不必要的ROLLBACK事件 apply_diff_relay_logs #對比中繼日誌的差異、並將差異的事件應用至其它的slave

4)MHA 配置文件詳解

[server default] #默認區域、也是全局範圍、如果MySQL節點中有相同的地方、則參數可定義於此、比如binlog位置相同 manager_workdir = /etc/mha/conf #MHA的工作目錄、裡面存放著配置文件、日誌文件、腳本等 manager_log = /etc/mha/logs/ms1.log #定義MHA日誌所存儲的目錄 remote_workdir = /opt #故障轉移發生之後、MHA則會在MySQL節點上保存binlog文件、而這個目錄就是存儲binlog文件的、默認為/var/tmp report_script = /etc/mha/script/send_report #故障轉移發生之後、如果需要查看故障轉移報告、則可調用此腳本、但裡面有些參數還需調整 shutdown_script = /etc/mha/script/power_manager #設置Master出現故障之後關機、用於防止腦裂 master_ip_failover_script = /etc/mha/script/ms1_ip_failover #定義Master出現故障之後的自動轉移腳本、裡面有些參數還需調整 secondary_check_script = /usr/local/bin/masterha_secondary_check -s 10.2.3.12 -s 10.2.3.13 #默認為單線路檢查、即Manager to Master、此項為定義多線路檢查Master狀態、如果Manager到Master之間的監控出現問題、則Manager嘗試從10.2.3.12或10.2.3.13登錄到Master user = mha #定義MHA管理用戶、這個用戶需要在所有MySQL節點中存在 password = abc-123 #定義MHA管理用戶的密碼 repl_user = slave #定義MySQL主從複製用戶、這個用戶也需要在所有MySQL節點中存在 repl_password = abc-123 #定義主從複製用戶的密碼 ping_type = connect #定義MHA的檢查類型、默認為select、從0.56開始也引入了insert類型、這裡我定義為connect ping_interval = 3 #定義檢查間隔、默認為3秒 [server1] #本地區域、在這裡所定義的只針對在這個區域的MySQL節點生效 ssh_user = root #定義MySQL節點上的SSH用戶、如果所有MySQL節點的遠程用戶一樣、則可將其寫入默認區域 ssh_port = 22 #定義SSH的埠、如果所有MySQL節點的遠程埠一樣、則可將其寫入默認區域 hostname = 10.2.3.11 #定義MySQL節點的IP地址 port = 3635 #定義MySQL埠 master_binlog_dir = /usr/local/mysql/binlog #定義MySQL存儲binlog的位置、如果所有MySQL節點存儲binlog的位置相同、則可將其寫入默認區域 [server2] ssh_user = root ssh_port = 22 hostname = 10.2.3.12 port = 3635 candidate_master = 1 #設置候選Master、即發生故障轉移之後將此節點提升為新的Master、即使這個節點不是集群中事件最新的Slave check_repl_delay = 0 #觸發故障切換後在選擇新的Master時將會忽略複製延遲、可以與 candidate_master = 1 配合使用、說的通俗一點就是故障轉移之後、下一個Master一定是我 master_binlog_dir = /usr/local/mysql/binlog [server3] ssh_user = root ssh_port = 22 hostname = 10.2.3.13 port = 3635 master_binlog_dir = /usr/local/mysql/binlog [server3] ssh_user = root ssh_port = 22 hostname = 10.2.3.14 port = 3635 no_master = 1 #如果此項等於1、則相當於設置了這個MySQL節點永遠不會成為Master、這對於不同SSH埠的MySQL節點非常有效、下面我會說到 master_binlog_dir = /usr/local/mysql/binlog

二、MySQL+MHA+Haproxy 部署

1)整體架構

2)伺服器資訊

| 主機 | 地址 | 系統 | 角色 | 埠 |

| node1 | 10.2.3.11 | CentOS-7.5 | Master | 3635 |

| node2 | 10.2.3.12 | CentOS-7.5 | Slave-1 | 3635 |

| node3 | 10.2.3.13 | CentOS-7.5 | Slave-2 | 3635 |

| node4 | 10.2.3.14 | CentOS-7.5 | Slave-3 | 3635 |

| node5 | 10.2.3.15 | CentOS-7.5 | Manager | Null |

| node6 | 10.2.3.16 | CentOS-7.5 | Proxy | 9999 |

3)安裝MySQL

1、下載軟體包

[root@node1 ~]# wget -c https://downloads.mysql.com/archives/get/file/mysql-5.7.27.tar.gz [root@node1 ~]# wget -c https://nchc.dl.sourceforge.net/project/boost/boost/1.59.0/boost_1_59_0.tar.gz

2、安裝相應的依賴包

[root@node1 ~]# yum -y install ncurses ncurses-devel cmake libaio libaio-devel pcre pcre-devel zlib zlib-devel bison bison-devel libverto libverto-devel libstdc++ libstdc++-devel dbus dbus-devel libss libss-devel gcc gcc-c++ autoconf m4 libgcc e2fsprogs perl-Data-Dumper

3、為MySQL創建運行用戶與組

[root@node1 ~]# groupadd mysql [root@node1 ~]# useradd -M -s /sbin/nologin mysql -g mysql

4、解壓boost工具、並移動到指定位置即可、無需編譯安裝

[root@node1 ~]# tar xf boost_1_59_0.tar.gz [root@node1 ~]# mv boost_1_59_0 /usr/local/boost

5、編譯安裝MySQL

[root@node1 ~]# tar xf mysql-5.7.27.tar.gz -C /usr/src/ [root@node1 ~]# cd /usr/src/mysql-5.7.27/ [root@node1 mysql-5.7.27]# cmake -DCMAKE_INSTALL_PREFIX=/usr/local/mysql -DMYSQL_DATADIR=/usr/local/mysql/data -DSYSCONFDIR=/etc -DDEFAULT_CHARSET=utf8 -DDEFAULT_COLLATION=utf8_general_ci -DWITH_EXTRA_CHARSETS=all -DENABLED_LOCAL_INFILE=ON -DWITH_DEBUG=0 -DWITH_BOOST=/usr/local/boost -DWITH_FEDERATED_STORAGE_ENGINE=1 -DMYSQL_UNIX_ADDR=/usr/local/mysql/tmp/mysql.sock make -j 8 && make install -j 8 && cd ~

6、編譯安裝完成以後、創建對應的目錄、並調整相應的參數

[root@node1 ~]# mkdir /usr/local/mysql/pid [root@node1 ~]# mkdir /usr/local/mysql/logs [root@node1 ~]# mkdir /usr/local/mysql/socket [root@node1 ~]# mkdir /usr/local/mysql/tmp [root@node1 ~]# mkdir /usr/local/mysql/ibtmp [root@node1 ~]# mkdir /usr/local/mysql/binlog [root@node1 ~]# mkdir /usr/local/mysql/relaylog [root@node1 ~]# mkdir /usr/local/mysql/outcsv/ [root@node1 ~]# mkdir /usr/local/mysql/ibdata [root@node1 ~]# mkdir /usr/local/mysql/undolog [root@node1 ~]# mkdir /usr/local/mysql/redolog [root@node1 ~]# chown -R mysql:mysql /usr/local/mysql [root@node1 ~]# chmod -R 750 /usr/local/mysql/outcsv [root@node1 ~]# echo 'export PATH=/usr/local/mysql/bin:$PATH' >> /etc/profile [root@node1 ~]# source /etc/profile

7、定義MySQL配置文件

[root@node1 ~]# cat > /etc/my.cnf << EOF [client] port = 3635 socket = /usr/local/mysql/socket/mysql.sock [mysqld] user = mysql port = 3635 federated skip_ssl bind_address = 0.0.0.0 max_connections = 3600 max_connect_errors = 200 autocommit = ON skip-name-resolve symbolic-links = 0 skip-external-locking log_timestamps = system explicit_defaults_for_timestamp = ON transaction_isolation = read-committed binlog_gtid_simple_recovery = ON show_compatibility_56 = ON transaction_write_set_extraction = OFF socket = /usr/local/mysql/socket/mysql.sock pid-file = /usr/local/mysql/pid/mysql.pid log-error = /usr/local/mysql/logs/mysql_error.log secure-file-priv = /usr/local/mysql/outcsv innodb_tmpdir = /usr/local/mysql/ibtmp basedir = /usr/local/mysql datadir = /usr/local/mysql/data tmpdir = /usr/local/mysql/tmp character-set-server = utf8 init_connect = SET NAMES utf8 collation-server = utf8_general_ci slow_query_log = ON long_query_time = 1 min_examined_row_limit = 960 log_slow_admin_statements = ON log_slow_slave_statements = ON log_queries_not_using_indexes = OFF slow_query_log_file = /usr/local/mysql/logs/mysql_slow.log back_log = 360 tmp_table_size = 64M max_allowed_packet = 64M max_heap_table_size = 64M sort_buffer_size = 1M join_buffer_size = 1M read_buffer_size = 2M read_rnd_buffer_size = 2M thread_cache_size = 64 thread_stack = 256K query_cache_size = 32M query_cache_limit = 2M query_cache_min_res_unit = 2K table_open_cache = 4096 open_files_limit = 65535 connect_timeout = 9 interactive_timeout = 21600 wait_timeout = 21600 innodb_data_file_path = ibdata1:12M;ibdata:12M:autoextend innodb_autoextend_increment = 12 innodb_data_home_dir = /usr/local/mysql/ibdata innodb_undo_tablespaces = 4 innodb_undo_logs = 128 innodb_max_undo_log_size = 1G innodb_undo_log_truncate = ON innodb_purge_rseg_truncate_frequency = 10 innodb_undo_directory = /usr/local/mysql/undolog innodb_log_file_size = 128M innodb_log_buffer_size = 16M innodb_log_files_in_group = 3 innodb_flush_log_at_trx_commit = 2 innodb_flush_log_at_timeout = 1 innodb_flush_method = O_DIRECT innodb_log_group_home_dir = /usr/local/mysql/redolog innodb_temp_data_file_path = ibtmp1:12M:autoextend:max:5G innodb_fast_shutdown = 0 default-storage-engine = InnoDB innodb_buffer_pool_size = 2G table_open_cache_instances = 8 innodb_buffer_pool_chunk_size = 256MB innodb_page_size = 16k innodb_sort_buffer_size = 1MB innodb_file_per_table = ON innodb_large_prefix = ON innodb_purge_threads = 8 innodb_page_cleaners = 8 innodb_read_io_threads = 8 innodb_write_io_threads = 8 innodb_thread_concurrency = 16 innodb_flush_neighbors = 0 innodb_lru_scan_depth = 1024 innodb_lock_wait_timeout = 60 innodb_print_all_deadlocks = ON innodb_deadlock_detect = ON innodb_strict_mode = ON innodb_buffer_pool_load_at_startup = ON innodb_buffer_pool_dump_at_shutdown = ON EOF

8、對MySQL進行初始化

[root@node1 ~]# /usr/local/mysql/bin/mysqld --initialize-insecure --user=mysql --basedir=/usr/local/mysql --datadir=/usr/local/mysql/data [root@node1 ~]# echo $? 0

9、將MySQL添加為系統服務

[root@node1 ~]# cp /usr/local/mysql/support-files/mysql.server /usr/local/mysql/bin/mysql.sh [root@node1 ~]# chmod +x /usr/local/mysql/bin/mysql.sh [root@node1 ~]# cat > /usr/lib/systemd/system/mysql.service << EOF [Unit] Description=MySQL After=network.target [Service] User=mysql Group=mysql Type=forking PrivateTmp=false LimitNOFILE=65535 ExecStart=/usr/local/mysql/bin/mysql.sh start ExecStop=/usr/local/mysql/bin/mysql.sh stop [Install] WantedBy=multi-user.target EOF

10、啟動MySQL並為root用戶設置密碼

[root@node1 ~]# systemctl start mysql [root@node1 ~]# systemctl enable mysql [root@node1 ~]# netstat -anput | grep mysql tcp 0 0 0.0.0.0:3635 0.0.0.0:* LISTEN 34411/mysqld [root@node1 ~]# mysql -e"update mysql.user set authentication_string=password('abc-123') where user='root';flush privileges;"

4)部署主從複製

1、創建相應的用戶

提示:下面創建的用戶依次為:程式連接用戶、MHA管理用戶、中繼日誌清理用戶、主從複製用戶、這些用戶需要在所有的MySQL節點上創建

[root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"grant all privileges on *.* to 'mds'@'10.2.3.%' identified by 'abc-123';" [root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"grant all privileges on *.* to 'mha'@'10.2.3.%' identified by 'abc-123';" [root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"grant all privileges on *.* to 'purge'@'127.0.0.1' identified by 'abc-123';" [root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"grant replication slave on *.* to 'slave'@'10.2.3.%' identified by 'abc-123';"

2、複製對應的命令

提示:因為在檢查與轉移的過程中需要調用MySQL的命令、所以這裡需要將其複製到對應的地方、這些命令也需要在所有的MySQL節點上執行

[root@node1 ~]# cp /usr/local/mysql/bin/mysql /usr/local/bin/ [root@node1 ~]# cp /usr/local/mysql/bin/mysqlbinlog /usr/local/bin/ [root@node1 ~]# cp /usr/local/mysql/bin/mysqladmin /usr/local/bin/

3、定義Master 的配置文件

server-id = 11 sync_binlog = 0 expire_logs_days = 6 max_binlog_size = 512M max_binlog_cache_size = 8M binlog_format = row log-slave-updates = true log_bin = /usr/local/mysql/binlog/mysql-bin log_bin_index = /usr/local/mysql/binlog/mysql-bin.index

配置完成以後重啟MySQL、並查看相應的參數、因為後面的Slave節點需要用到

[root@node1 ~]# systemctl restart mysql [root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"show master status;" +------------------+----------+--------------+------------------+-------------------+ | File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set | +------------------+----------+--------------+------------------+-------------------+ | mysql-bin.000001 | 154 | | mysql | | +------------------+----------+--------------+------------------+-------------------+

4、定義Slave-1 的配置文件

server-id = 12 sync_binlog = 0 expire_logs_days = 6 max_binlog_size = 512M max_binlog_cache_size = 8M binlog_format = row log-slave-updates = true log_bin = /usr/local/mysql/binlog/mysql-bin log_bin_index = /usr/local/mysql/binlog/mysql-bin.index sync_relay_log = 0 sync_relay_log_info = 0 relay_log_purge = OFF relay_log_recovery = ON max_relay_log_size = 512M master_info_repository = table relay_log_info_repository = table relay-log = /usr/local/mysql/relaylog/mysql-relay relay-log-index = /usr/local/mysql/relaylog/mysql-relay.index

5、定義Slave-2 的配置文件

server-id = 13 sync_binlog = 0 expire_logs_days = 6 max_binlog_size = 512M max_binlog_cache_size = 8M binlog_format = row log-slave-updates = true log_bin = /usr/local/mysql/binlog/mysql-bin log_bin_index = /usr/local/mysql/binlog/mysql-bin.index sync_relay_log = 0 sync_relay_log_info = 0 relay_log_purge = OFF relay_log_recovery = ON max_relay_log_size = 512M master_info_repository = table relay_log_info_repository = table relay-log = /usr/local/mysql/relaylog/mysql-relay relay-log-index = /usr/local/mysql/relaylog/mysql-relay.index

6、定義Slave-3 的配置文件

server-id = 14 sync_binlog = 0 expire_logs_days = 6 max_binlog_size = 512M max_binlog_cache_size = 8M binlog_format = row log-slave-updates = true log_bin = /usr/local/mysql/binlog/mysql-bin log_bin_index = /usr/local/mysql/binlog/mysql-bin.index sync_relay_log = 0 sync_relay_log_info = 0 relay_log_purge = OFF relay_log_recovery = ON max_relay_log_size = 512M master_info_repository = table relay_log_info_repository = table relay-log = /usr/local/mysql/relaylog/mysql-relay relay-log-index = /usr/local/mysql/relaylog/mysql-relay.index

7、配置完成以後重啟服務並向Master進行同步

[root@node2 ~]# systemctl restart mysql [root@node2 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"change master to master_host='10.2.3.11',master_port=3635,master_user='slave',master_password='abc-123',master_log_file='mysql-bin.000001',master_log_pos=154;" [root@node2 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"start slave;" [root@node2 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"show slave statusG" | head -13 *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 10.2.3.11 Master_User: slave Master_Port: 3635 Connect_Retry: 60 Master_Log_File: mysql-bin.000001 Read_Master_Log_Pos: 1017 Relay_Log_File: mysql-relay.000002 Relay_Log_Pos: 1183 Relay_Master_Log_File: mysql-bin.000001 Slave_IO_Running: Yes Slave_SQL_Running: Yes

5)部署Haproxy

1、下載軟體包

[root@haproxy ~]# wget -c http://www.haproxy.org/download/1.5/src/haproxy-1.5.19.tar.gz

2、創建運行用戶並安裝依賴包

[root@haproxy ~]# useradd -M -s /sbin/nologin ha [root@haproxy ~]# yum -y install bzip2-devel pcre-devel zlib-devel gcc gcc-c++ openssl openssl-devel

3、編譯安裝Haproxy

[root@haproxy ~]# tar xf haproxy-1.5.19.tar.gz -C /usr/src/ [root@haproxy ~]# cd /usr/src/haproxy-1.5.19/ [root@haproxy haproxy-1.5.19]# make -j 8 TARGET=linux2628 ARCH=x86_64 PREFIX=/usr/local/haproxy [root@haproxy haproxy-1.5.19]# make install PREFIX=/usr/local/haproxy && cd

4、創建相應的存儲目錄並複製模板文件

[root@haproxy ~]# mkdir /usr/local/haproxy/pid [root@haproxy ~]# mkdir /usr/local/haproxy/conf [root@haproxy ~]# [root@haproxy ~]# cp /usr/src/haproxy-1.5.19/examples/haproxy.cfg /usr/local/haproxy/conf/ [root@haproxy ~]# cp /usr/local/haproxy/conf/haproxy.cfg /usr/local/haproxy/conf/haproxy.cfg.bak

5、定義Haproxy配置文件

提示1:底部的三台節點均為Slave、這樣才可達到讀負載均衡的效果、演算法定義輪詢、而類型一定要為TCP

提示2:如果不對日誌進行切割、那麼在Haproxy的配置文件當中建議將日誌選項去掉、否則硬碟會被撐爆

[root@haproxy ~]# vim /usr/local/haproxy/conf/haproxy.cfg global daemon uid 1000 gid 1000 nbproc 1 maxconn 6500 ulimit-n 65535 pidfile /usr/local/haproxy/pid/haproxy.pid defaults log global mode tcp retries 3 timeout connect 3s timeout client 60s timeout server 60s option redispatch option abortonclose listen status bind 10.2.3.15:80 mode http log global stats refresh 3s stats uri /mds stats hide-version stats auth mds:mds-123 stats realm Welcome to Haproxy Manager Page listen ms1 bind 10.2.3.15:9999 mode tcp log global balance roundrobin server Slave1 10.2.3.12:3635 check inter 3000 rise 2 fall 3 weight 3 server Slave2 10.2.3.13:3635 check inter 3000 rise 2 fall 3 weight 3 server Slave3 10.2.3.14:3635 check inter 3000 rise 2 fall 3 weight 3

6、將Haproxy添加為系統服務

[root@haproxy ~]# cat >> /usr/lib/systemd/system/haproxy.service << EOF [Unit] Description=Haproxy After=network.target [Service] LimitNOFILE=65535 Type=forking ExecStart=/usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/conf/haproxy.cfg [Install] WantedBy=multi-user.target EOF

7、啟動Haproxy並將其設置為開啟自啟

[root@haproxy ~]# systemctl start haproxy [root@haproxy ~]# systemctl status haproxy ● haproxy.service - Haproxy Loaded: loaded (/usr/lib/systemd/system/haproxy.service; enabled; vendor preset: disabled) Active: active (running) since 三 2020-01-15 15:00:54 CST; 2min 29s ago Main PID: 1359 (haproxy) CGroup: /system.slice/haproxy.service └─1359 /usr/local/haproxy/sbin/haproxy -f /usr/local/haproxy/conf/haproxy.cfg 1月 15 15:00:54 haproxy systemd[1]: Starting Haproxy... 1月 15 15:00:54 haproxy systemd[1]: Started Haproxy. [root@haproxy ~]# systemctl enable haproxy [root@haproxy ~]# netstat -anput | grep haproxy tcp 0 0 10.2.3.15:9999 0.0.0.0:* LISTEN 1359/haproxy tcp 0 0 10.2.3.15:80 0.0.0.0:* LISTEN 1359/haproxy

8、在客戶端測試連接Haproxy

注意:連接所使用的用戶為程式連接用戶、這個用戶我們在上面已經創建過了、因為要做讀的負載均衡所以每台MySQL節點上都要有這個用戶

[root@client ~]# mysql -umds -pabc-123 -h10.2.3.15 -P9999 Welcome to the MariaDB monitor. Commands end with ; or g. Your MySQL connection id is 11 Server version: 5.7.27-log Source distribution Copyright (c) 2000, 2018, Oracle, MariaDB Corporation Ab and others. Type 'help;' or 'h' for help. Type 'c' to clear the current input statement. MySQL [(none)]> show databases; +--------------------+ | Database | +--------------------+ | information_schema | | mysql | | performance_schema | | sys | +--------------------+ 4 rows in set (0.02 sec) MySQL [(none)]>

9、查看Haproxy建立連接的埠

如下所示我們可以看到、Haproxy與一台客戶端進行了TCP連接、在這個時候也與一台MySQL進行了TCP連接、10.2.3.14為MySQL節點、10.2.3.16為測試客戶端

[root@haproxy ~]# netstat -anput | grep haproxy tcp 0 0 10.2.3.15:9999 0.0.0.0:* LISTEN 1359/haproxy tcp 0 0 10.2.3.15:80 0.0.0.0:* LISTEN 1359/haproxy tcp 0 0 10.2.3.15:9999 10.2.3.16:59796 ESTABLISHED 1359/haproxy tcp 0 0 10.2.3.15:14001 10.2.3.14:3635 ESTABLISHED 1359/haproxy

10、查看MySQL建立連接的埠

10.2.3.15為Haproxy伺服器、可以看到MySQL與其進行了連接、但這並不是固定的、因為演算法選擇了輪詢、所以如果後面還有客戶端連接、那Haproxy就不一定是與這台MySQL進行連接了、有可能是10.2.3.14

[root@node4 ~]# netstat -anput | grep mysql tcp 0 0 0.0.0.0:3635 0.0.0.0:* LISTEN 3816/mysqld tcp 0 0 10.2.3.14:3635 10.2.3.15:14001 ESTABLISHED 3816/mysqld tcp 0 0 10.2.3.14:7489 10.2.3.11:3635 ESTABLISHED 3816/mysqld

主從複製也有了、負載均衡也有了、下面就來看一下高可用吧!

6)部署 MHA

1、配置免密登錄

在MHA-Manager上執行:

[root@manager ~]# ssh-keygen -t dsa > /dev/null [root@manager ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.11 [root@manager ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.12 [root@manager ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.13 [root@manager ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.14

在Master上執行:

[root@node1 ~]# ssh-keygen -t dsa > /dev/null [root@node1 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.12 [root@node1 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.13 [root@node1 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.14

在Slave-1上執行:

[root@node2 ~]# ssh-keygen -t dsa > /dev/null [root@node2 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.11 [root@node2 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.13 [root@node2 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.14

在Slave-2上執行:

[root@node3 ~]# ssh-keygen -t dsa > /dev/null [root@node3 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.11 [root@node3 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.12 [root@node3 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.14

在Slave-3上執行:

[root@node4 ~]# ssh-keygen -t dsa > /dev/null [root@node4 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.11 [root@node4 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.12 [root@node4 ~]# ssh-copy-id -i .ssh/id_dsa.pub -p 22 10.2.3.13

2、安裝依賴包

注意:除Haproxy節點以外所有節點都要安裝

[root@node1 ~]# yum -y install epel* [root@node1 ~]# yum makecache [root@node1 ~]# yum install -y perl-CPAN perl-ExtUtils-CBuilder perl-ExtUtils-MakeMaker perl-DBD-MySQL perl-Config-Tiny perl-Log-Dispatch perl-Parallel-ForkManager perl-Config-IniFiles perl-Time-HiRes

3、部署 MHA-Node

注意:MHA-Node需要在所有MySQL節點上安裝、也包括Manager節點

[root@manager ~]# tar xf mha-mysql-node-0.57.tar.gz -C /usr/src/ [root@manager ~]# cd /usr/src/mha4mysql-node-0.57/ [root@manager mha4mysql-node-0.57]# perl Makefile.PL [root@manager mha4mysql-node-0.57]# make -j 8 && make install -j 8 && cd

4、部署 MHA-Manager

注意:MHA-Manager 只需在Manager節點安裝即可

[root@manager ~]# tar xf mha-mysql-manager-0.57.tar.gz -C /usr/src/ [root@manager ~]# cd /usr/src/mha4mysql-manager-0.57/ [root@manager mha4mysql-manager-0.57]# perl Makefile.PL [root@manager mha4mysql-manager-0.57]# make -j 8 && make install -j 8 && cd

5、創建對應的存儲目錄

[root@manager ~]# mkdir /etc/mha [root@manager ~]# mkdir /etc/mha/logs [root@manager ~]# mkdir /etc/mha/conf [root@manager ~]# mkdir /etc/mha/script [root@manager ~]# cp /usr/src/mha4mysql-manager-0.57/samples/conf/app1.cnf /etc/mha/conf/ [root@manager ~]# mv /etc/mha/conf/app1.cnf /etc/mha/conf/ms1.conf

6、添加虛擬地址

注意:只需在Master節點上添加即可、這個虛擬地址無需固定、因為在進行故障轉移的時候這個地址需要移動

[root@node1 ~]# ifconfig ens33:1 10.2.3.30 [root@node1 ~]# ifconfig ens33:1 ens33:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500 inet 10.2.3.30 netmask 255.0.0.0 broadcast 10.255.255.255 ether 00:0c:29:44:ef:f3 txqueuelen 1000 (Ethernet)

7、編寫故障轉移腳本

注意1:在以下腳本中定義了虛擬IP、那麼這個虛擬IP在Master節點上就要有

注意2:如果所有MySQL節點的SSH埠不同、那麼就需要在底部的兩段函數當中使用 -p 選項來指定摘除VIP主機的SSH埠與添加VIP主機的SSH埠

注意3:如果所有MySQL節點的SSH埠不同、那麼就需要在故障轉移腳本當中明確指定那台Slave為故障轉移之後是新的Master、也就是指定添加VIP主機的SSH埠

[root@manager ~]# vim /etc/mha/script/ms1_ip_failover

#!/usr/bin/env perl #ChangeTime:2020/1/15 use strict; use warnings FATAL => 'all'; use Getopt::Long; my ( $command, $ssh_user, $orig_master_host, $orig_master_ip, $orig_master_port, $new_master_host, $new_master_ip, $new_master_port, ); #=================================================================== my $vip = '10.2.3.30/24'; my $key = '1'; my $ssh_start_vip = "ifconfig ens33:$key $vip"; my $ssh_stop_vip = "ifconfig ens33:$key down"; #=================================================================== GetOptions( 'command=s' => $command, 'ssh_user=s' => $ssh_user, 'orig_master_host=s' => $orig_master_host, 'orig_master_ip=s' => $orig_master_ip, 'orig_master_port=i' => $orig_master_port, 'new_master_host=s' => $new_master_host, 'new_master_ip=s' => $new_master_ip, 'new_master_port=i' => $new_master_port, ); exit &main(); sub main { print "nnIN Script Test====$ssh_stop_vip==$ssh_start_vip===nn"; if ( $command eq "stop" || $command eq "stopssh" ) { my $exit_code = 1; eval { print "Disabling the VIP on old Master: $orig_master_host n"; &stop_vip(); $exit_code = 0; }; if ($@) { warn "Got Error: $@n"; exit $exit_code; } exit $exit_code; } elsif ( $command eq "start" ) { my $exit_code = 10; eval { print "Enabling The VIP - $vip on The New Master - $new_master_host n"; &start_vip(); $exit_code = 0; }; if ($@) { warn $@; exit $exit_code; } exit $exit_code; } elsif ( $command eq "status" ) { print "Checking The Status of The Script.. OK n"; exit 0; } else { exit 1; } } #==================================================================== #Remove VIP sub stop_vip() { `ssh -p 22 $ssh_user@$orig_master_host " $ssh_stop_vip "`; } #==================================================================== #Add VIP sub start_vip() { `ssh -p 22 $ssh_user@$new_master_host " $ssh_start_vip "`; } #====================================================================

注意:寫完腳本之後別忘了給其賦予執行許可權、命令為:chmod +x /etc/mha/script/ms1_ip_failover

8、定義MHA配置文件

注意1:在部署MHA的時候、一套主從複製組最好為1主3從、因為其中1台Master出現了異常、切換完成以後還有2兩台節點可讀

注意2:因為MySQL節點的SSH埠都不同、所以需要將其中的一台節點設置為 no_master = 1、即此節點永遠不會成為主、這樣定義是為了防止在切換的時候SSH連接不上或者轉移錯誤、從而導致VIP不進行漂移

[root@manager ~]# vim /etc/mha/conf/ms1.conf [server default] remote_workdir = /opt manager_workdir = /etc/mha/conf manager_log = /etc/mha/logs/ms1.log master_ip_failover_script = /etc/mha/script/ms1_ip_failover user = mha password = abc-123 repl_user = slave repl_password = abc-123 ping_type = connect ping_interval = 3 [server1] ssh_user = root ssh_port = 22 hostname = 10.2.3.11 port = 3635 master_binlog_dir = /usr/local/mysql/binlog [server2] ssh_user = root ssh_port = 22 hostname = 10.2.3.12 port = 3635 candidate_master = 1 check_repl_delay = 0 master_binlog_dir = /usr/local/mysql/binlog [server3] ssh_user = root ssh_port = 22 hostname = 10.2.3.13 port = 3635 master_binlog_dir = /usr/local/mysql/binlog [server4] ssh_user = root ssh_port = 22 hostname = 10.2.3.14 port = 3635 no_master = 1 master_binlog_dir = /usr/local/mysql/binlog

9、檢查免密登錄

[root@manager ~]# masterha_check_ssh --conf=/etc/mha/conf/ms1.conf Wed Jan 15 16:12:44 2020 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping. Wed Jan 15 16:12:44 2020 - [info] Reading application default configuration from /etc/mha/conf/ms1.conf.. Wed Jan 15 16:12:44 2020 - [info] Reading server configuration from /etc/mha/conf/ms1.conf.. Wed Jan 15 16:12:44 2020 - [info] Starting SSH connection tests.. Wed Jan 15 16:12:45 2020 - [debug] Wed Jan 15 16:12:44 2020 - [debug] Connecting via SSH from [email protected](10.2.3.11:22) to [email protected](10.2.3.12:22).. Wed Jan 15 16:12:44 2020 - [debug] ok. Wed Jan 15 16:12:44 2020 - [debug] Connecting via SSH from [email protected](10.2.3.11:22) to [email protected](10.2.3.13:22).. Wed Jan 15 16:12:45 2020 - [debug] ok. Wed Jan 15 16:12:45 2020 - [debug] Connecting via SSH from [email protected](10.2.3.11:22) to [email protected](10.2.3.14:22).. Wed Jan 15 16:12:45 2020 - [debug] ok. Wed Jan 15 16:12:46 2020 - [debug] Wed Jan 15 16:12:44 2020 - [debug] Connecting via SSH from [email protected](10.2.3.12:22) to [email protected](10.2.3.11:22).. Wed Jan 15 16:12:45 2020 - [debug] ok. Wed Jan 15 16:12:45 2020 - [debug] Connecting via SSH from [email protected](10.2.3.12:22) to [email protected](10.2.3.13:22).. Wed Jan 15 16:12:45 2020 - [debug] ok. Wed Jan 15 16:12:45 2020 - [debug] Connecting via SSH from [email protected](10.2.3.12:22) to [email protected](10.2.3.14:22).. Wed Jan 15 16:12:46 2020 - [debug] ok. Wed Jan 15 16:12:47 2020 - [debug] Wed Jan 15 16:12:45 2020 - [debug] Connecting via SSH from [email protected](10.2.3.14:22) to [email protected](10.2.3.11:22).. Wed Jan 15 16:12:46 2020 - [debug] ok. Wed Jan 15 16:12:46 2020 - [debug] Connecting via SSH from [email protected](10.2.3.14:22) to [email protected](10.2.3.12:22).. Wed Jan 15 16:12:46 2020 - [debug] ok. Wed Jan 15 16:12:46 2020 - [debug] Connecting via SSH from [email protected](10.2.3.14:22) to [email protected](10.2.3.13:22).. Wed Jan 15 16:12:47 2020 - [debug] ok. Wed Jan 15 16:12:47 2020 - [debug] Wed Jan 15 16:12:45 2020 - [debug] Connecting via SSH from [email protected](10.2.3.13:22) to [email protected](10.2.3.11:22).. Wed Jan 15 16:12:45 2020 - [debug] ok. Wed Jan 15 16:12:45 2020 - [debug] Connecting via SSH from [email protected](10.2.3.13:22) to [email protected](10.2.3.12:22).. Wed Jan 15 16:12:46 2020 - [debug] ok. Wed Jan 15 16:12:46 2020 - [debug] Connecting via SSH from [email protected](10.2.3.13:22) to [email protected](10.2.3.14:22).. Wed Jan 15 16:12:46 2020 - [debug] ok. Wed Jan 15 16:12:47 2020 - [info] All SSH connection tests passed successfully.

10、檢查主從複製

[root@manager ~]# masterha_check_repl --conf=/etc/mha/conf/ms1.conf Wed Jan 15 16:54:02 2020 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping. Wed Jan 15 16:54:02 2020 - [info] Reading application default configuration from /etc/mha/conf/ms1.conf.. Wed Jan 15 16:54:02 2020 - [info] Reading server configuration from /etc/mha/conf/ms1.conf.. Wed Jan 15 16:54:02 2020 - [info] MHA::MasterMonitor version 0.57. Wed Jan 15 16:54:04 2020 - [info] GTID failover mode = 0 Wed Jan 15 16:54:04 2020 - [info] Dead Servers: Wed Jan 15 16:54:04 2020 - [info] Alive Servers: Wed Jan 15 16:54:04 2020 - [info] 10.2.3.11(10.2.3.11:3635) Wed Jan 15 16:54:04 2020 - [info] 10.2.3.12(10.2.3.12:3635) Wed Jan 15 16:54:04 2020 - [info] 10.2.3.13(10.2.3.13:3635) Wed Jan 15 16:54:04 2020 - [info] 10.2.3.14(10.2.3.14:3635) Wed Jan 15 16:54:04 2020 - [info] Alive Slaves: Wed Jan 15 16:54:04 2020 - [info] 10.2.3.12(10.2.3.12:3635) Version=5.7.27-log (oldest major version between slaves) log-bin:enabled Wed Jan 15 16:54:04 2020 - [info] Replicating from 10.2.3.11(10.2.3.11:3635) Wed Jan 15 16:54:04 2020 - [info] Primary candidate for the new Master (candidate_master is set) Wed Jan 15 16:54:04 2020 - [info] 10.2.3.13(10.2.3.13:3635) Version=5.7.27-log (oldest major version between slaves) log-bin:enabled Wed Jan 15 16:54:04 2020 - [info] Replicating from 10.2.3.11(10.2.3.11:3635) Wed Jan 15 16:54:04 2020 - [info] 10.2.3.14(10.2.3.14:3635) Version=5.7.27-log (oldest major version between slaves) log-bin:enabled Wed Jan 15 16:54:04 2020 - [info] Replicating from 10.2.3.11(10.2.3.11:3635) Wed Jan 15 16:54:04 2020 - [info] Not candidate for the new Master (no_master is set) Wed Jan 15 16:54:04 2020 - [info] Current Alive Master: 10.2.3.11(10.2.3.11:3635) Wed Jan 15 16:54:04 2020 - [info] Checking slave configurations.. Wed Jan 15 16:54:04 2020 - [info] read_only=1 is not set on slave 10.2.3.12(10.2.3.12:3635). Wed Jan 15 16:54:04 2020 - [info] read_only=1 is not set on slave 10.2.3.13(10.2.3.13:3635). Wed Jan 15 16:54:04 2020 - [info] read_only=1 is not set on slave 10.2.3.14(10.2.3.14:3635). Wed Jan 15 16:54:04 2020 - [info] Checking replication filtering settings.. Wed Jan 15 16:54:04 2020 - [info] binlog_do_db= , binlog_ignore_db= mysql Wed Jan 15 16:54:04 2020 - [info] Replication filtering check ok. Wed Jan 15 16:54:04 2020 - [info] GTID (with auto-pos) is not supported Wed Jan 15 16:54:04 2020 - [info] Starting SSH connection tests.. Wed Jan 15 16:54:07 2020 - [info] All SSH connection tests passed successfully. Wed Jan 15 16:54:07 2020 - [info] Checking MHA Node version.. Wed Jan 15 16:54:08 2020 - [info] Version check ok. Wed Jan 15 16:54:08 2020 - [info] Checking SSH publickey authentication settings on the current master.. Wed Jan 15 16:54:08 2020 - [info] HealthCheck: SSH to 10.2.3.11 is reachable. Wed Jan 15 16:54:08 2020 - [info] Master MHA Node version is 0.57. Wed Jan 15 16:54:08 2020 - [info] Checking recovery script configurations on 10.2.3.11(10.2.3.11:3635).. Wed Jan 15 16:54:08 2020 - [info] Executing command: save_binary_logs --command=test --start_pos=4 --binlog_dir=/usr/local/mysql/binlog --output_file=/opt/save_binary_logs_test --manager_version=0.57 --start_file=mysql-bin.000002 Wed Jan 15 16:54:08 2020 - [info] Connecting to [email protected](10.2.3.11:22).. Creating /opt if not exists.. ok. Checking output directory is accessible or not.. ok. Binlog found at /usr/local/mysql/binlog, up to mysql-bin.000002 Wed Jan 15 16:54:09 2020 - [info] Binlog setting check done. Wed Jan 15 16:54:09 2020 - [info] Checking SSH publickey authentication and checking recovery script configurations on all alive slave servers.. Wed Jan 15 16:54:09 2020 - [info] Executing command : apply_diff_relay_logs --command=test --slave_user='mha' --slave_host=10.2.3.12 --slave_ip=10.2.3.12 --slave_port=3635 --workdir=/opt --target_version=5.7.27-log --manager_version=0.57 --relay_dir=/usr/local/mysql/relaylog --current_relay_log=mysql-relay.000001 --slave_pass=xxx Wed Jan 15 16:54:09 2020 - [info] Connecting to [email protected](10.2.3.12:22).. Checking slave recovery environment settings.. Relay log found at /usr/local/mysql/relaylog, up to mysql-relay.000004 Temporary relay log file is /usr/local/mysql/relaylog/mysql-relay.000004 Testing mysql connection and privileges..mysql: [Warning] Using a password on the command line interface can be insecure. done. Testing mysqlbinlog output.. done. Cleaning up test file(s).. done. Wed Jan 15 16:54:09 2020 - [info] Executing command : apply_diff_relay_logs --command=test --slave_user='mha' --slave_host=10.2.3.13 --slave_ip=10.2.3.13 --slave_port=3635 --workdir=/opt --target_version=5.7.27-log --manager_version=0.57 --relay_dir=/usr/local/mysql/relaylog --current_relay_log=mysql-relay.000001 --slave_pass=xxx Wed Jan 15 16:54:09 2020 - [info] Connecting to [email protected](10.2.3.13:22).. Checking slave recovery environment settings.. Relay log found at /usr/local/mysql/relaylog, up to mysql-relay.000004 Temporary relay log file is /usr/local/mysql/relaylog/mysql-relay.000004 Testing mysql connection and privileges..mysql: [Warning] Using a password on the command line interface can be insecure. done. Testing mysqlbinlog output.. done. Cleaning up test file(s).. done. Wed Jan 15 16:54:10 2020 - [info] Executing command : apply_diff_relay_logs --command=test --slave_user='mha' --slave_host=10.2.3.14 --slave_ip=10.2.3.14 --slave_port=3635 --workdir=/opt --target_version=5.7.27-log --manager_version=0.57 --relay_dir=/usr/local/mysql/relaylog --current_relay_log=mysql-relay.000003 --slave_pass=xxx Wed Jan 15 16:54:10 2020 - [info] Connecting to [email protected](10.2.3.14:22).. Checking slave recovery environment settings.. Relay log found at /usr/local/mysql/relaylog, up to mysql-relay.000006 Temporary relay log file is /usr/local/mysql/relaylog/mysql-relay.000006 Testing mysql connection and privileges.. done. Testing mysqlbinlog output..mysql: [Warning] Using a password on the command line interface can be insecure. done. Cleaning up test file(s).. done. Wed Jan 15 16:54:10 2020 - [info] Slaves settings check done. Wed Jan 15 16:54:10 2020 - [info] 10.2.3.11(10.2.3.11:3635) (current master) +--10.2.3.12(10.2.3.12:3635) +--10.2.3.13(10.2.3.13:3635) +--10.2.3.14(10.2.3.14:3635) Wed Jan 15 16:54:10 2020 - [info] Checking replication health on 10.2.3.12.. Wed Jan 15 16:54:10 2020 - [info] ok. Wed Jan 15 16:54:10 2020 - [info] Checking replication health on 10.2.3.13.. Wed Jan 15 16:54:10 2020 - [info] ok. Wed Jan 15 16:54:10 2020 - [info] Checking replication health on 10.2.3.14.. Wed Jan 15 16:54:10 2020 - [info] ok. Wed Jan 15 16:54:10 2020 - [info] Checking master_ip_failover_script status: Wed Jan 15 16:54:10 2020 - [info] /etc/mha/script/ms1_ip_failover --command=status --ssh_user=root --orig_master_host=10.2.3.11 --orig_master_ip=10.2.3.11 --orig_master_port=3635 IN Script Test====ifconfig ens33:1 down==ifconfig ens33:1 10.2.3.30/24=== Checking The Status of The Script.. OK Wed Jan 15 16:54:10 2020 - [info] OK. Wed Jan 15 16:54:10 2020 - [warning] shutdown_script is not defined. Wed Jan 15 16:54:10 2020 - [info] Got exit code 0 (Not master dead). MySQL Replication Health is OK.

11、開啟Manager監控

[root@manager ~]# nohup masterha_manager --conf=/etc/mha/conf/ms1.conf --ignore_last_failover > /dev/null & [1] 21271 [root@manager ~]# Wed Jan 15 16:55:22 2020 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping. Wed Jan 15 16:55:22 2020 - [info] Reading application default configuration from /etc/mha/conf/ms1.conf.. Wed Jan 15 16:55:22 2020 - [info] Reading server configuration from /etc/mha/conf/ms1.conf.. [root@manager ~]# netstat -anput | grep perl tcp 0 0 10.2.3.16:17399 10.2.3.11:3635 ESTABLISHED 2269/perl [root@manager ~]# tail -f /etc/mha/logs/ms1.log IN Script Test====ifconfig ens33:1 down==ifconfig ens33:1 10.2.3.30/24=== Checking The Status of The Script.. OK Wed Jan 15 16:55:30 2020 - [info] OK. Wed Jan 15 16:55:30 2020 - [warning] shutdown_script is not defined. Wed Jan 15 16:55:30 2020 - [info] Set master ping interval 3 seconds. Wed Jan 15 16:55:30 2020 - [info] Starting ping health check on 10.2.3.11(10.2.3.11:3635).. Wed Jan 15 16:55:30 2020 - [info] Ping(CONNECT) succeeded, waiting until MySQL doesn't respond..

12、Master狀態檢查

[root@manager ~]# masterha_check_status --conf=/etc/mha/conf/ms1.conf ms1 (pid:2198) is running(0:PING_OK), master:10.2.3.11

13、關閉Manager監控

[root@manager ~]# masterha_stop --conf=/etc/mha/conf/ms1.conf Stopped ms1 successfully. [1]+ 退出 1 masterha_manager --conf=/etc/mha/conf/ms1.conf --ignore_last_failover > /dev/null

14、中繼日誌清理

注意:清理中繼日誌的命令為MHA-Node自帶的 purge_relay_logs、這條命令只支援在127.0.0.1或者localhost上運行

如下所示:所有的MySQL節點都已經和Manager節點進行了免密、所以可以在Manager節點上使用SSH命令遠程執行

[root@manager ~]# ssh -p 22 10.2.3.14 purge_relay_logs --user=purge --password=abc-123 --port=3635 2020-01-15 16:29:09: purge_relay_logs script started. Opening /usr/local/mysql/relaylog/mysql-relay.000001 .. Opening /usr/local/mysql/relaylog/mysql-relay.000002 .. Executing SET GLOBAL relay_log_purge=1; FLUSH LOGS; sleeping a few seconds so that SQL thread can delete older relay log files (if it keeps up); SET GLOBAL relay_log_purge=0; .. ok. 2020-01-15 16:29:12: All relay log purging operations succeeded.

沒有清理之前、Slave上的中繼日誌如下所示:

[root@node4 ~]# ll /usr/local/mysql/relaylog/ 總用量 12 -rw-r----- 1 mysql mysql 203 1月 15 14:35 mysql-relay.000001 -rw-r----- 1 mysql mysql 1183 1月 15 14:35 mysql-relay.000002 -rw-r----- 1 mysql mysql 90 1月 15 14:35 mysql-relay.index

清理完成之後、Slave上的中繼日誌如下所示:

[root@node4 ~]# ll /usr/local/mysql/relaylog/ 總用量 12 -rw-r----- 1 mysql mysql 1232 1月 15 16:29 mysql-relay.000002 -rw-r----- 1 mysql mysql 273 1月 15 16:29 mysql-relay.000003 -rw-r----- 1 mysql mysql 90 1月 15 16:29 mysql-relay.index

在生產環境中可能會產生大量的中繼日誌、所以我們可以使用腳本並結合任務計劃來定時清理中繼日誌、如下所示:每3小時清理一次中繼日誌

[root@manager ~]# vim /root/relaylog_clear.sh #!/bin/bash #定義遠程節點的SSH埠與地址 MY_SSH="22" MY_ADDR="10.2.3.14" #定義遠程節點的MySQL用戶與密碼等資訊、底部為清除中繼日誌的命令 MY_USER="purge" MY_PASS="abc-123" MY_HOST="127.0.0.1" MY_PORT="3635" MHA_CMD="purge_relay_logs" #執行清除命令 ssh -p $MY_SSH $MY_ADDR $MHA_CMD --user=$MY_USER --password=$MY_PASS --host=$MY_HOST --port=$MY_PORT >> /etc/mha/logs/relaylog_clear.log &

編寫完腳本之後我們需要為其授予執行許可權、並添加到任務計劃當中定時運行

[root@manager ~]# chmod +x /root/relaylog_clear.sh [root@manager ~]# crontab -e * */3 * * * bash /root/relaylog_clear.sh

二、MySQL+MHA+Haproxy 測試

1)在Master上停掉MySQL服務

[root@node1 ~]# systemctl stop mysql [root@node1 ~]# netstat -anput | grep mysql [root@node1 ~]# ps -elf | grep -v grep | grep mysql

2)在Manager上查看日誌(故障轉移報告)

[root@manager ~]# tail -f /etc/mha/logs/ms1.log ----- Failover Report ----- ms1: MySQL Master failover 10.2.3.11(10.2.3.11:3635) to 10.2.3.12(10.2.3.12:3635) succeeded Master 10.2.3.11(10.2.3.11:3635) is down! Check MHA Manager logs at manager:/etc/mha/logs/ms1.log for details. Started automated(non-interactive) failover. Invalidated master IP address on 10.2.3.11(10.2.3.11:3635) The latest slave 10.2.3.12(10.2.3.12:3635) has all relay logs for recovery. Selected 10.2.3.12(10.2.3.12:3635) as a new master. 10.2.3.12(10.2.3.12:3635): OK: Applying all logs succeeded. 10.2.3.12(10.2.3.12:3635): OK: Activated master IP address. 10.2.3.13(10.2.3.13:3635): This host has the latest relay log events. 10.2.3.14(10.2.3.14:3635): This host has the latest relay log events. Generating relay diff files from the latest slave succeeded. 10.2.3.14(10.2.3.14:3635): OK: Applying all logs succeeded. Slave started, replicating from 10.2.3.12(10.2.3.12:3635) 10.2.3.13(10.2.3.13:3635): OK: Applying all logs succeeded. Slave started, replicating from 10.2.3.12(10.2.3.12:3635) 10.2.3.12(10.2.3.12:3635): Resetting slave info succeeded. Master failover to 10.2.3.12(10.2.3.12:3635) completed successfully.

這個時候Manager進程就會退出、並會在conf目錄生成一個名為 ms1.failover.complete 的文件、如果下次還需開啟Manager監控、則需要將其刪除

[root@manager ~]# Wed Jan 15 16:57:28 2020 - [warning] Global configuration file /etc/masterha_default.cnf not found. Skipping. Wed Jan 15 16:57:28 2020 - [info] Reading application default configuration from /etc/mha/conf/ms1.conf.. Wed Jan 15 16:57:28 2020 - [info] Reading server configuration from /etc/mha/conf/ms1.conf.. [1]+ 完成 masterha_manager --conf=/etc/mha/conf/ms1.conf --ignore_last_failover > /dev/null [root@manager ~]# ls -lh /etc/mha/conf/ 總用量 8.0K -rw-r--r-- 1 root root 813 1月 15 16:53 ms1.conf -rw-r--r-- 1 root root 0 1月 15 16:57 ms1.failover.complete -rw-r--r-- 1 root root 177 1月 15 16:57 saved_master_binlog_from_10.2.3.11_3635_20200115165728.binlog

3)在node2(候選Master)上查看漂移過來的虛擬IP

[root@node2 ~]# ifconfig ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500 inet 10.2.3.12 netmask 255.255.255.0 broadcast 10.2.3.255 inet6 fe80::250:56ff:fe20:9efa prefixlen 64 scopeid 0x20<link> ether 00:50:56:20:9e:fa txqueuelen 1000 (Ethernet) RX packets 36630 bytes 34108215 (32.5 MiB) RX errors 0 dropped 0 overruns 0 frame 0 TX packets 19913 bytes 2042497 (1.9 MiB) TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0 ens33:1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500 inet 10.2.3.30 netmask 255.255.255.0 broadcast 10.2.3.255 ether 00:50:56:20:9e:fa txqueuelen 1000 (Ethernet) lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536 inet 127.0.0.1 netmask 255.0.0.0 inet6 ::1 prefixlen 128 scopeid 0x10<host> loop txqueuelen 1000 (Local Loopback) RX packets 207 bytes 16541 (16.1 KiB) RX errors 0 dropped 0 overruns 0 frame 0 TX packets 207 bytes 16541 (16.1 KiB) TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

4)在其它的Slave上查看複製狀態、可以看見它已經將Master重新指向了node2(10.2.3.12)

[root@node3 ~]# mysql -uroot -pabc-123 2> /dev/null -e"show slave statusG" | head -13 *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 10.2.3.12 Master_User: slave Master_Port: 3635 Connect_Retry: 60 Master_Log_File: mysql-bin.000001 Read_Master_Log_Pos: 2169 Relay_Log_File: mysql-relay.000002 Relay_Log_Pos: 320 Relay_Master_Log_File: mysql-bin.000001 Slave_IO_Running: Yes Slave_SQL_Running: Yes

5)查看保存的binlog文件

提示:剛剛我們在配置文件當中定義了 remote_workdir = /opt 、現在已經進行故障轉移、這個時候我們就可以看到它保存的binlog了、如下所示

注意:這些保存的文件、是在每台MySQL節點上都有的、無論是Master還是Slave、如果日誌文件很大、那將非常佔用空間、所以進行故障轉移之後一定要注意

[root@node2 ~]# ls -lh /opt/ 總用量 8.0K -rw-r--r-- 1 root root 1002 1月 15 16:57 relay_log_apply_for_10.2.3.12_3635_20200115165728_err.log -rw-r--r-- 1 root root 177 1月 15 16:57 saved_master_binlog_from_10.2.3.11_3635_20200115165728.binlog

6)修復異常的Master、並作為Slave角色納入集群

先重啟MySQL

[root@node1 ~]# systemctl restart mysql [root@node1 ~]# netstat -anput | grep mysql tcp 0 0 0.0.0.0:3635 0.0.0.0:* LISTEN 5516/mysqld

在node2上查看binlog狀態

[root@node2 ~]# mysql -uroot -pabc-123 2> /dev/null -e"show master status;" +------------------+----------+--------------+------------------+-------------------+ | File | Position | Binlog_Do_DB | Binlog_Ignore_DB | Executed_Gtid_Set | +------------------+----------+--------------+------------------+-------------------+ | mysql-bin.000001 | 2169 | | mysql | | +------------------+----------+--------------+------------------+-------------------+

得知狀態以後、即可指向新的Master

[root@node1 ~]# mysql -uroot -pabc-123 2> /dev/null -e"change master to master_host='10.2.3.12',master_port=3635,master_user='slave',master_password='abc-123',master_log_file='mysql-bin.000001',master_log_pos=2169;" [root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"start slave;" [root@node1 ~]# mysql -uroot -pabc-123 -P3635 2> /dev/null -e"show slave statusG" | head -13 *************************** 1. row *************************** Slave_IO_State: Waiting for master to send event Master_Host: 10.2.3.12 Master_User: slave Master_Port: 3635 Connect_Retry: 60 Master_Log_File: mysql-bin.000001 Read_Master_Log_Pos: 2169 Relay_Log_File: node1-relay-bin.000002 Relay_Log_Pos: 320 Relay_Master_Log_File: mysql-bin.000001 Slave_IO_Running: Yes Slave_SQL_Running: Yes

三、數據備份

1)全量備份

#!/bin/bash #腳本聲明:MySQL全量備份腳本,所使用的工具為mysqldump #注意事項:備份的時候命名中有db的是備份庫,有tb的是備份表 #更改地方:需要備份的庫,備份文件存儲目錄,資料庫連接資訊,日誌路徑 #定義備份的庫 MY_NAME="mds" #定義時間格式 MY_TIME="$(date +%Y-%m-%d_%H-%M-%S)" #定義備份文件存儲目錄 MY_BACK="/opt/fulldose" ###################### if [[ ! -d "${MY_BACK}" ]] then mkdir ${MY_BACK} fi #定義連接資訊 MY_USER="mds" MY_PASS="abc-123" MY_HOST="10.2.3.14" MY_PORT="3635" #定義備份指令 mysqldump -u${MY_USER} -p${MY_PASS} -h${MY_HOST} -P${MY_PORT} 2> /dev/null -B ${MY_NAME} -F --master-data=2 --single-transaction > ${MY_BACK}/db_${MY_NAME}_${MY_TIME}.sql

2)增量備份

1、在需要備份的MySQL節點上開啟binlog

log_bin = /usr/local/mysql/binlog/mysql-bin log_bin_index = /usr/local/mysql/binlog/mysql-bin.index

2、在需要備份的MySQL節點上編輯rsync配置文件

[root@node4 ~]# vim /etc/rsyncd.conf uid = root gid = root use chroot = yes list = no port = 873 address = 10.2.3.14 hosts allow = 10.2.3.0/24 max connections = 30 pid file = /var/run/rsyncd.pid log file = /var/log/rsyncd.log lock file = /var/run/rsyncd.lock [ms1] path = /usr/local/mysql/binlog/ read only = no auth users = backup secrets file = /etc/backup_user.db dont compress = *.gz *.tgz *.zip *.z *.Z *.rpm *.deb *.bz2

3、在需要備份的MySQL節點上創建備份帳號並定義許可權

[root@node4 ~]# echo "backup:abc-123" > /etc/backup_user.db [root@node4 ~]# chmod 600 /etc/backup_user.db

4、重啟rsync服務

[root@node4 ~]# systemctl restart rsyncd [root@node4 ~]# netstat -anput | grep rsync tcp 0 0 10.2.3.14:873 0.0.0.0:* LISTEN 9289/rsync

5、在備份伺服器上定義免交互登錄

[root@bak ~]# echo "abc-123" > /etc/server.pass [root@bak ~]# chmod 600 /etc/server.pass

6、在備份伺服器上免交互增量備份MySQL數據到/opt目錄

[root@bak ~]# rsync -azt --password-file=/etc/server.pass [email protected]::ms1 /opt

7、在備份伺服器上使用任務計劃定時備份MySQL數據到/opt目錄

[root@bak ~]# crontab -e 30 03 * * * rsync -azt --password-file=/etc/server.pass [email protected]::ms1 /opt

四、MHA問題記錄

At least one of monitoring servers is not reachable from this script. This is likely a network

解決方法:刪除/etc/masterha_default.cnf 這個默認的配置文件、或者剛開始就不要添加進去

Target master's advisory lock is already held by someone. Please check whether you monitor the same master from multiple monitoring processes.

解決方法:在/etc/mha/conf 目錄下、刪除 ms1.master_status.health 這個狀態文件、然後再啟動Manager即可

is dead, but must be alive! Check server settings.

解決方法:如果是一主兩從、那麼就代表還有一台節點沒啟動、因為MHA至少要三台節點

Can't exec "mysqlbinlog": 沒有那個文件或目錄 at /usr/local/share/perl5/MHA/BinlogManager.pm line 106.

解決方法:MHA有可能沒有讀取到變數、只需在各個MySQL節點上添加對應命令的軟連接即可、因為在故障轉移的時候需要調用這些命令

Bareword "FIXME_xxx" not allowed while "strict subs" in use at /usr/local/bin/master_ip_failover line 93.

解決方法:因為虛擬IP會牽扯到其它的軟體或者臨時地址、所以在沒有配置虛擬IP前、需要大家先注釋掉 master_ip_failover_script = /usr/local/bin/master_ip_failover 這個選項

五、測試總結

1、如果所有節點的SSH埠不同、那就需要在切換腳本的函數當中使用 -p 選項來指定遠端節點的SSH埠 2、如果所有節點的SSH埠相同、則可以不用指定、只需在腳本當中定義在Master上添加的VIP地址即可 3、指定SSH埠的時候需要指定兩個、第一個為摘除VIP主機的SSH埠、第二個為添加VIP主機的SSH埠 4、如果所有節點的SSH埠不同、那麼進行一次故障轉移之後、需要再次明確指定摘除VIP主機的SSH埠以及添加VIP主機的SSH埠 5、如果因為某種原因發生了故障轉移、那就需要先在Haproxy的配置文件當中將某個Slave節點注釋掉、因為其中一個可能會是新的Master 6、注釋完成以後先不要著急重啟、先試著修復異常的Master、並在MySQL的配置文件中添加相應的Slave參數 7、修復完成以後將其作為Slave角色並納入集群、最後將它的IP地址添加到Haproxy的配置文件當中以參與讀的負載均衡 8、以上配置都完成以後、即可重啟Haproxy服務、最後在Manager節點上啟動Manager監控新的Master即可 9、如果沒有修復舊的Master、那麼就需要在MHA的配置文件當中將舊Master的配置項注釋、否則在啟動Manager監控的時候會報錯、如果修復了、則可忽略 10、如果所有節點的SSH埠不同、且不在故障轉移腳本當中指定SSH的埠、那麼Master出現異常以後、可以進行切換、但是VIP不會漂移、即新的Master不能添加VIP、舊的Master不能移除VIP

【只是為了打發時間】