[Cats vs. Dogs Dataset] Using a Learning Rate Decay Strategy and Testing While Training

- March 12, 2020

- Notes

Dataset download:

Link: https://pan.baidu.com/s/1l1AnBgkAAEhh0vI5_loWKw

Extraction code: 2xq4

Creating the dataset: https://www.cnblogs.com/xiximayou/p/12398285.html

Reading the dataset: https://www.cnblogs.com/xiximayou/p/12422827.html

Training: https://www.cnblogs.com/xiximayou/p/12448300.html

Saving the model and resuming training: https://www.cnblogs.com/xiximayou/p/12452624.html

Loading the saved model and testing: https://www.cnblogs.com/xiximayou/p/12459499.html

Splitting off a validation set and validating while training: https://www.cnblogs.com/xiximayou/p/12464738.html

The relationship between epoch, batch size, and step: https://www.cnblogs.com/xiximayou/p/12405485.html

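A suitable learning rate is crucial for training a network. If the learning rate is too large, the parameters oscillate back and forth around the optimum and may fail to converge at all. If it is too small, the network converges slowly. A common practice is to start with a relatively large learning rate and then keep decaying it as training proceeds. There are many ways to decay the learning rate; here we only use simple ones.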
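The effect of "too large" and "too small" is easy to see on a toy problem. The sketch below is an illustration added here and is not part of the post's training code. It runs plain gradient descent on f(x) = x², whose gradient is 2x, so each update multiplies x by (1 − 2·lr):

- lr > 1 diverges.
- 0.5 < lr < 1 oscillates around the minimum while converging.
- A very small lr barely moves.

The function name `gradient_descent` is hypothetical.

```python
# Toy illustration: gradient descent on f(x) = x**2 (minimum at x = 0).

def gradient_descent(lr, x0=1.0, steps=20):
    """Run `steps` updates x <- x - lr * f'(x) and return the trajectory."""
    xs = [x0]
    x = x0
    for _ in range(steps):
        grad = 2 * x          # derivative of x**2
        x = x - lr * grad     # equivalent to x *= (1 - 2 * lr)
        xs.append(x)
    return xs


if __name__ == "__main__":
    for lr in (1.1, 0.9, 0.4, 0.01):
        xs = gradient_descent(lr)
        print(f"lr={lr:<5} x1={xs[1]:+.3f} final |x|={abs(xs[-1]):.6f}")
```

With lr=1.1 the iterate flips sign and grows every step. With lr=0.9 it flips sign but shrinks. With lr=0.4 it reaches almost exactly 0 within 20 steps. With lr=0.01 it has only moved about a third of the way.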

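In the previous section we split off a validation set. In this section we test while training, and we save two models: the one from the final epoch and the one with the highest test accuracy.

First, the learning rate decay strategy. Two options are shown here:

```python
# Option 1: multiply the lr by 0.1 every 80 epochs
scheduler = optim.lr_scheduler.StepLR(optimizer, 80, 0.1)
# Option 2: multiply the lr by 0.1 at epochs 80 and 160
scheduler = optim.lr_scheduler.MultiStepLR(optimizer, [80, 160], 0.1)
```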

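The first option multiplies the learning rate by 0.1 every 80 epochs.

The second option multiplies the learning rate by 0.1 at epochs 80 and 160.

For example, with the second option and an initial learning rate of 0.1:

- Epochs 1-80 use 0.1.
- Epochs 81-160 use 0.1 × 0.1 = 0.01.
- Epoch 161 onward uses 0.01 × 0.1 = 0.001.

A common choice is to decay at roughly 1/3 and 2/3 of the total number of epochs. For example, with 200 epochs you would decay around epochs 70 and 140. That said, the right choice depends on the situation.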
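To check which learning rate each epoch gets without running any training, here is a plain-Python sketch of the closed-form rules behind the two schedulers. It assumes 1-indexed epochs and one `scheduler.step()` at the end of every epoch, as in the post's training loop.

`step_lr` and `multistep_lr` are hypothetical helper names. PyTorch itself updates the lr multiplicatively in place; these functions only reproduce the equivalent closed form.

```python
from bisect import bisect_right


def step_lr(base_lr, epoch, step_size, gamma):
    """lr used in 1-indexed `epoch` under a StepLR-style rule.

    Epoch `epoch` trains after (epoch - 1) end-of-epoch scheduler steps.
    """
    steps_done = epoch - 1
    return base_lr * gamma ** (steps_done // step_size)


def multistep_lr(base_lr, epoch, milestones, gamma):
    """lr used in 1-indexed `epoch` under a MultiStepLR-style rule."""
    steps_done = epoch - 1
    # bisect_right counts the milestones that have already been reached
    return base_lr * gamma ** bisect_right(milestones, steps_done)


if __name__ == "__main__":
    for epoch in (1, 80, 81, 160, 161, 240, 241):
        print(epoch,
              step_lr(0.1, epoch, 80, 0.1),
              multistep_lr(0.1, epoch, [80, 160], 0.1))
```

The two rules agree up to epoch 240. After that, StepLR keeps decaying every 80 epochs (0.0001 from epoch 241), while MultiStepLR stays at 0.001 because it has no milestones left.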
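The 1/3 and 2/3 rule of thumb can be turned into a milestone list for `MultiStepLR` with a one-line helper. `milestones_at_fractions` is a hypothetical name used only for this sketch.

```python
def milestones_at_fractions(num_epochs, fractions=(1 / 3, 2 / 3)):
    """Place lr-decay milestones at the given fractions of the training run."""
    return [round(num_epochs * f) for f in fractions]


if __name__ == "__main__":
    print(milestones_at_fractions(200))  # [67, 133]
    print(milestones_at_fractions(6))    # [2, 4]
```

For 200 epochs this gives exactly [67, 133], and the post's 70 and 140 are approximate choices near those points. For the 6-epoch run in this post it gives [2, 4], the milestones used in main.py.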

Next, we add the learning rate decay strategy to main.py:

main.py

```python
import sys
# Make the project modules on Google Drive importable (Colab)
sys.path.append("/content/drive/My Drive/colab notebooks")

import numpy as np
import torch
import torch.nn as nn
import torch.optim as optim
import torchvision

from utils import rdata
from model import resnet
import train

# Fix random seeds for reproducibility
np.random.seed(0)
torch.manual_seed(0)
torch.cuda.manual_seed_all(0)
torch.backends.cudnn.deterministic = True
# benchmark=True lets cuDNN choose algorithms non-deterministically,
# which undermines deterministic=True, so it is disabled here
torch.backends.cudnn.benchmark = False

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

batch_size = 128
train_loader, val_loader, test_loader = rdata.load_dataset(batch_size)

# ResNet-18 trained from scratch, with a 2-class (cat/dog) output layer
model = torchvision.models.resnet18(pretrained=False)
model.fc = nn.Linear(model.fc.in_features, 2, bias=False)
model.cuda()

# Number of training epochs
num_epochs = 6
# Initial learning rate
learning_rate = 0.1
# Loss function
criterion = nn.CrossEntropyLoss()
# Optimizer: for simplicity, SGD with momentum
optimizer = optim.SGD(params=model.parameters(), lr=learning_rate,
                      momentum=0.9, weight_decay=1e-4)
# Multiply the lr by 0.1 at milestones 2 and 4
scheduler = optim.lr_scheduler.MultiStepLR(optimizer, [2, 4], 0.1)

print("Training set size:", len(train_loader.dataset))
# print("Validation set size:", len(val_loader.dataset))
print("Test set size:", len(test_loader.dataset))


def main():
    trainer = train.Trainer(criterion, optimizer, model)
    trainer.loop(num_epochs, train_loader, val_loader, test_loader, scheduler)


if __name__ == "__main__":
    main()
```

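Here we train for only 6 epochs and decay the learning rate at epochs 2 and 4. Because `scheduler.step()` is called at the end of each epoch, the decayed rates take effect from epochs 3 and 5.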

train.py

```python
import torch

# Checkpoint directory on the mounted Google Drive (Colab)
SAVE_PATH = "/content/drive/My Drive/colab notebooks/output/"


class Trainer:
    def __init__(self, criterion, optimizer, model):
        self.criterion = criterion
        self.optimizer = optimizer
        self.model = model

    def get_lr(self):
        # Current learning rate of the first parameter group
        for param_group in self.optimizer.param_groups:
            return param_group['lr']

    def loop(self, num_epochs, train_loader, val_loader, test_loader,
             scheduler=None, acc1=0.0):
        self.acc1 = acc1  # best test accuracy seen so far
        for epoch in range(1, num_epochs + 1):
            lr = self.get_lr()
            print("epoch:{},lr:{}".format(epoch, lr))
            self.train(train_loader, epoch, num_epochs)
            # self.val(val_loader, epoch, num_epochs)
            self.test(test_loader, epoch, num_epochs)
            # PyTorch >= 1.1.0: step the scheduler once per epoch,
            # after all optimizer.step() calls of that epoch
            if scheduler is not None:
                scheduler.step()

    def train(self, dataloader, epoch, num_epochs):
        self.model.train()
        with torch.enable_grad():
            self._iteration_train(dataloader, epoch, num_epochs)

    def val(self, dataloader, epoch, num_epochs):
        self.model.eval()
        with torch.no_grad():
            self._iteration_val(dataloader, epoch, num_epochs)

    def test(self, dataloader, epoch, num_epochs):
        self.model.eval()
        with torch.no_grad():
            self._iteration_test(dataloader, epoch, num_epochs)

    def _iteration_train(self, dataloader, epoch, num_epochs):
        total_step = len(dataloader)
        tot_loss = 0.0
        correct = 0
        for i, (images, labels) in enumerate(dataloader):
            images = images.cuda()
            labels = labels.cuda()

            # Forward pass
            outputs = self.model(images)
            _, preds = torch.max(outputs.data, 1)
            loss = self.criterion(outputs, labels)

            # Backward pass and parameter update
            self.optimizer.zero_grad()
            loss.backward()
            self.optimizer.step()

            # loss is the batch mean, so tot_loss / len(dataset) below is roughly
            # (mean loss) / batch_size, hence the small loss values in the log
            tot_loss += loss.item()
            if (i + 1) % 2 == 0:
                print('Epoch: [{}/{}], Step: [{}/{}], Loss: {:.4f}'
                      .format(epoch, num_epochs, i + 1, total_step, loss.item()))
            correct += torch.sum(preds == labels.data).to(torch.float32)

        # Epoch summary
        epoch_loss = tot_loss / len(dataloader.dataset)
        print('train loss: {:.4f}'.format(epoch_loss))
        epoch_acc = correct / len(dataloader.dataset)
        print('train acc: {:.4f}'.format(epoch_acc))

        # Save the last epoch's model so training can be resumed later
        if epoch == num_epochs:
            state = {
                'model': self.model.state_dict(),
                'optimizer': self.optimizer.state_dict(),
                'epoch': epoch,
                'train_loss': epoch_loss,
                'train_acc': epoch_acc,
            }
            torch.save(state, SAVE_PATH + "resnet18_final.t7")

    def _iteration_val(self, dataloader, epoch, num_epochs):
        total_step = len(dataloader)
        tot_loss = 0.0
        correct = 0
        for i, (images, labels) in enumerate(dataloader):
            images = images.cuda()
            labels = labels.cuda()

            # Forward pass only (called under torch.no_grad())
            outputs = self.model(images)
            _, preds = torch.max(outputs.data, 1)
            loss = self.criterion(outputs, labels)
            tot_loss += loss.item()
            correct += torch.sum(preds == labels.data).to(torch.float32)
            if (i + 1) % 2 == 0:
                print('Epoch: [{}/{}], Step: [{}/{}], Loss: {:.4f}'
                      .format(epoch, num_epochs, i + 1, total_step, loss.item()))

        # Epoch summary
        epoch_loss = tot_loss / len(dataloader.dataset)
        print('val loss: {:.4f}'.format(epoch_loss))
        epoch_acc = correct / len(dataloader.dataset)
        print('val acc: {:.4f}'.format(epoch_acc))

    def _iteration_test(self, dataloader, epoch, num_epochs):
        total_step = len(dataloader)
        tot_loss = 0.0
        correct = 0
        for i, (images, labels) in enumerate(dataloader):
            images = images.cuda()
            labels = labels.cuda()

            # Forward pass only (called under torch.no_grad())
            outputs = self.model(images)
            _, preds = torch.max(outputs.data, 1)
            loss = self.criterion(outputs, labels)
            tot_loss += loss.item()
            correct += torch.sum(preds == labels.data).to(torch.float32)
            if (i + 1) % 2 == 0:
                print('Epoch: [{}/{}], Step: [{}/{}], Loss: {:.4f}'
                      .format(epoch, num_epochs, i + 1, total_step, loss.item()))

        # Epoch summary
        epoch_loss = tot_loss / len(dataloader.dataset)
        print('test loss: {:.4f}'.format(epoch_loss))
        epoch_acc = correct / len(dataloader.dataset)
        print('test acc: {:.4f}'.format(epoch_acc))

        # Save the model whenever the test accuracy beats the best so far
        if epoch_acc > self.acc1:
            self.acc1 = epoch_acc
            state = {
                "model": self.model.state_dict(),
                "optimizer": self.optimizer.state_dict(),
                "epoch": epoch,
                "epoch_loss": epoch_loss,
                "epoch_acc": epoch_acc,
                "acc1": self.acc1,
            }
            print("Best test accuracy so far at epoch {}: {}".format(epoch, epoch_acc))
            torch.save(state, SAVE_PATH + "resnet18_best.t7")
```

First, we added test() and _iteration_test() for testing.

Note the following warning:

UserWarning: Detected call of `lr_scheduler.step()` before `optimizer.step()`. In PyTorch 1.1.0 and later, you should call them in the opposite order: `optimizer.step()` before `lr_scheduler.step()`. Failure to do this will result in PyTorch skipping the first value of the learning rate schedule.

In other words, the intended usage is:

```python
>>> scheduler = ...
>>> for epoch in range(100):
>>>     train(...)
>>>     validate(...)
>>>     scheduler.step()
```
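To see what "skipping the first value" means in practice, here is a pure-Python toy. It mimics how a MultiStepLR-style scheduler counts its `step()` calls, then compares the learning rate each of 6 epochs trains with (milestones [2, 4]) under two orderings:

- `step()` called after training (the recommended order).
- `step()` called before training.

`ToyMultiStepLR` and `lrs_used` are made-up names for illustration. This is not PyTorch's implementation.

```python
class ToyMultiStepLR:
    """Hypothetical stand-in for a MultiStepLR-like scheduler.

    It counts step() calls and multiplies lr by gamma whenever the count
    reaches one of the milestones.
    """

    def __init__(self, lr, milestones, gamma):
        self.lr = lr
        self.milestones = set(milestones)
        self.gamma = gamma
        self.last_epoch = 0

    def step(self):
        self.last_epoch += 1
        if self.last_epoch in self.milestones:
            self.lr *= self.gamma


def lrs_used(num_epochs, milestones, step_first):
    """Return the lr each epoch trains with, for the chosen call order."""
    sched = ToyMultiStepLR(0.1, milestones, 0.1)
    used = []
    for _ in range(num_epochs):
        if step_first:          # wrong order: schedule advances before training
            sched.step()
        used.append(sched.lr)   # "train" this epoch with the current lr
        if not step_first:      # recommended order: train, validate, then step
            sched.step()
    return used


if __name__ == "__main__":
    print("step after: ", lrs_used(6, [2, 4], step_first=False))
    print("step before:", lrs_used(6, [2, 4], step_first=True))
```

With the recommended order, the six epochs use 0.1, 0.1, 0.01, 0.01, 0.001, 0.001 (up to float rounding), which matches the lr printed in this post's log. Stepping first makes every decay happen one epoch early.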

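Since PyTorch 1.1.0, `scheduler.step()` has to come last, after `optimizer.step()`. We also defined a helper that returns the current learning rate, and we print it at the start of every epoch. In addition, we save two checkpoints:

- The model from the last training epoch, so that training can be resumed later.
- The model with the highest test accuracy, so that it can be used afterwards.

The final output is shown below, with the per-step lines omitted: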
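The "save only when the test accuracy improves" rule can be checked without training. This sketch replays the test accuracies from this post's log, rounded to four decimals, and reports which epochs would overwrite resnet18_best.t7. `best_checkpoint_epochs` is a hypothetical helper, not part of train.py; it only mirrors the strict `>` comparison used in `_iteration_test()`.

```python
def best_checkpoint_epochs(test_accs, best_so_far=0.0):
    """Return (epochs that save a new best model, final best accuracy).

    Epochs are 1-indexed. A checkpoint is saved only when the accuracy is
    strictly greater than the best seen so far.
    """
    saved = []
    best = best_so_far
    for epoch, acc in enumerate(test_accs, start=1):
        if acc > best:
            saved.append(epoch)   # this is where torch.save(...) would run
            best = acc
    return saved, best


if __name__ == "__main__":
    accs = [0.5402, 0.5478, 0.6198, 0.6291, 0.6257, 0.6295]
    print(best_checkpoint_epochs(accs))  # ([1, 2, 3, 4, 6], 0.6295)
```

Epoch 5 (0.6257) does not beat epoch 4 (0.6291), so it is not saved. This matches the missing "best accuracy" line for epoch 5 in the log.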

```
Training set size: 18255
Test set size: 4750
epoch:1,lr:0.1
train loss: 0.0086
train acc: 0.5235
test loss: 0.0055
test acc: 0.5402
Best test accuracy so far at epoch 1: 0.5402105450630188
epoch:2,lr:0.1
train loss: 0.0054
train acc: 0.5562
test loss: 0.0055
test acc: 0.5478
Best test accuracy so far at epoch 2: 0.547789454460144
epoch:3,lr:0.010000000000000002
train loss: 0.0052
train acc: 0.6098
test loss: 0.0053
test acc: 0.6198
Best test accuracy so far at epoch 3: 0.6197894811630249
epoch:4,lr:0.010000000000000002
train loss: 0.0051
train acc: 0.6150
test loss: 0.0051
test acc: 0.6291
Best test accuracy so far at epoch 4: 0.6290526390075684
train loss: 0.0051
train acc: 0.6222
test loss: 0.0052
test acc: 0.6257
epoch:6,lr:0.0010000000000000002
train loss: 0.0051
train acc: 0.6224
test loss: 0.0052
test acc: 0.6295
Best test accuracy so far at epoch 6: 0.6294736862182617
```

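Oddly, the printed lr values do not end in clean zeros (for example 0.010000000000000002 instead of 0.01). This is ordinary floating-point rounding: 0.1 has no exact binary representation. When printing the lr and the accuracy, you can fix the number of decimal places with a format spec of the form `{:.Nf}`, e.g. `{:.4f}`.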
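A quick check in plain Python. The scheduler multiplies 0.1 by 0.1, which shows the same trailing digits as the log. `format_lr` is a hypothetical helper name used only here.

```python
def format_lr(value, digits=4):
    """Format a float with a fixed number of decimal places, like '{:.4f}'."""
    return "{:.{}f}".format(value, digits)


lr = 0.1 * 0.1                                   # one 0.1x decay applied to lr=0.1
print(lr)                                        # 0.010000000000000002
print("epoch:{},lr:{}".format(3, format_lr(lr)))  # epoch:3,lr:0.0100
print("test acc: {}".format(format_lr(0.6294736862182617)))  # test acc: 0.6295
```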

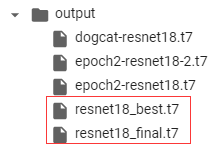

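Finally, let's look at the saved models:

Both checkpoints are indeed there.

Next section: visualizing the training and testing process.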