Docker and K8s Study Notes (23): Building a Kubernetes Cluster

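Hi everyone, it's been a while. The past few months have been very busy, so I haven't posted any updates. I finally have some free time today, so let's continue learning k8s. Since we will be digging into Pod scheduling later, the Minikube environment we set up earlier no longer meets our needs. In this post we will use kubeadm to build a Kubernetes cluster.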

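I. Creating the Virtual Machine

Our cluster consists of three nodes, kubevm1, kubevm2, and kubevm3, with kubevm1 acting as the master.

First we need to create a virtual machine with VirtualBox. The steps are as follows:

1. Create a new virtual machine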

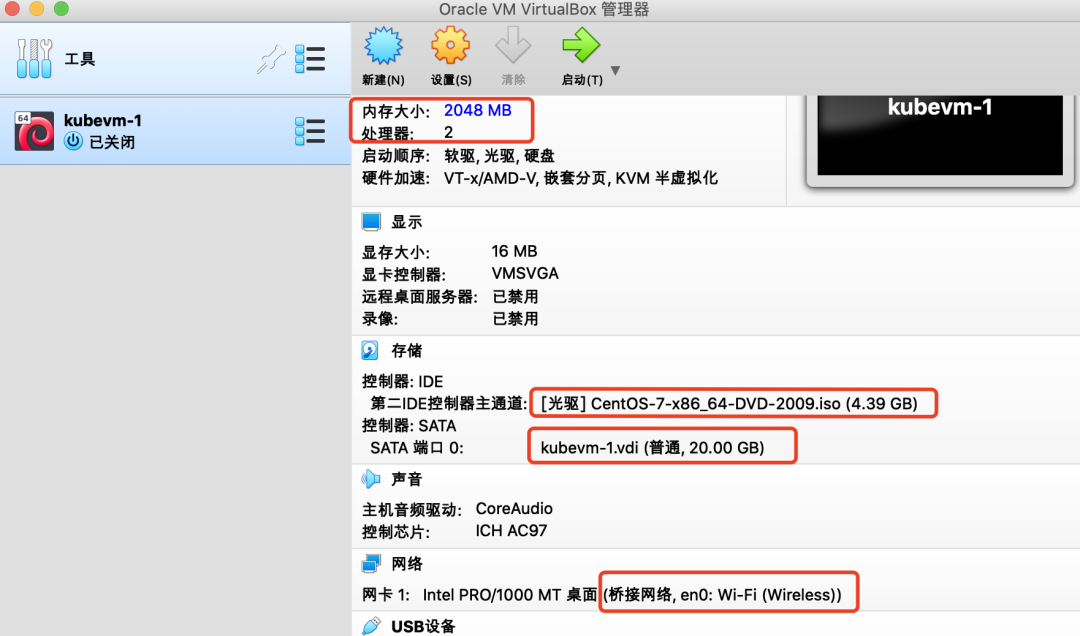

We give it 2 GB of memory, a 20 GB disk, and 2 CPU cores, and in the optical drive settings we select the CentOS image downloaded beforehand.

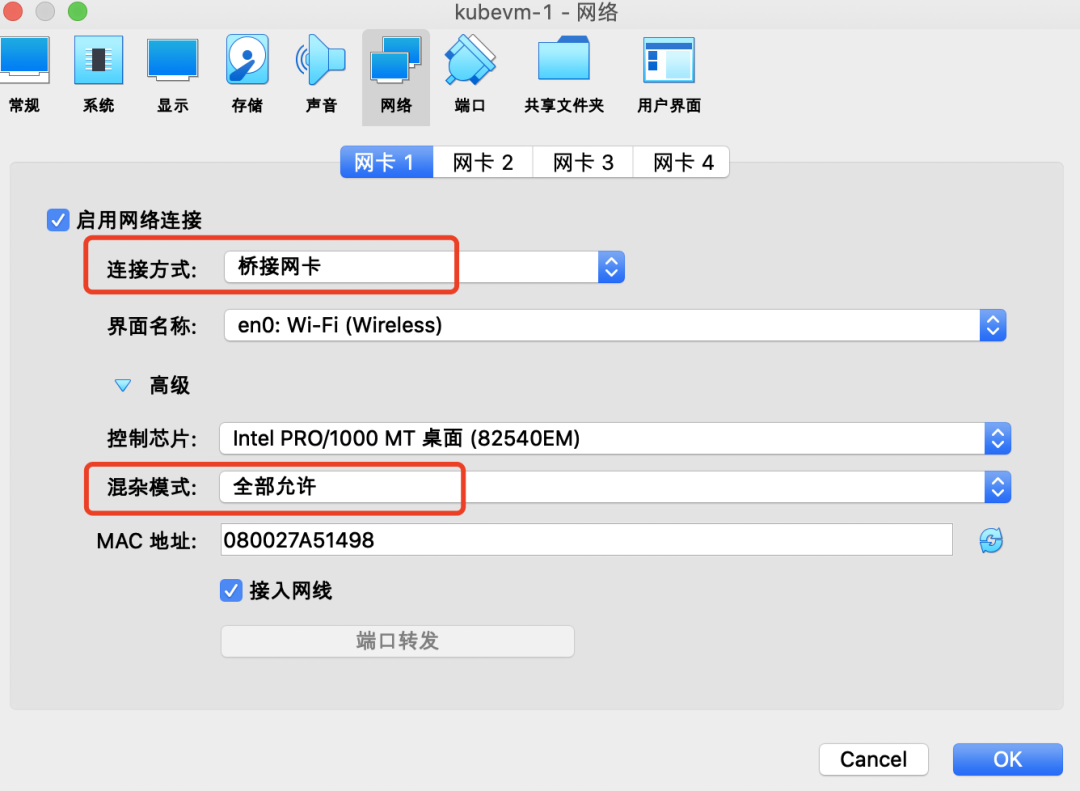

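Set the network to [Bridged Adapter].

2. Install the operating system

Start the virtual machine to enter the installer, then follow the installation wizard:

- Set the time zone;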

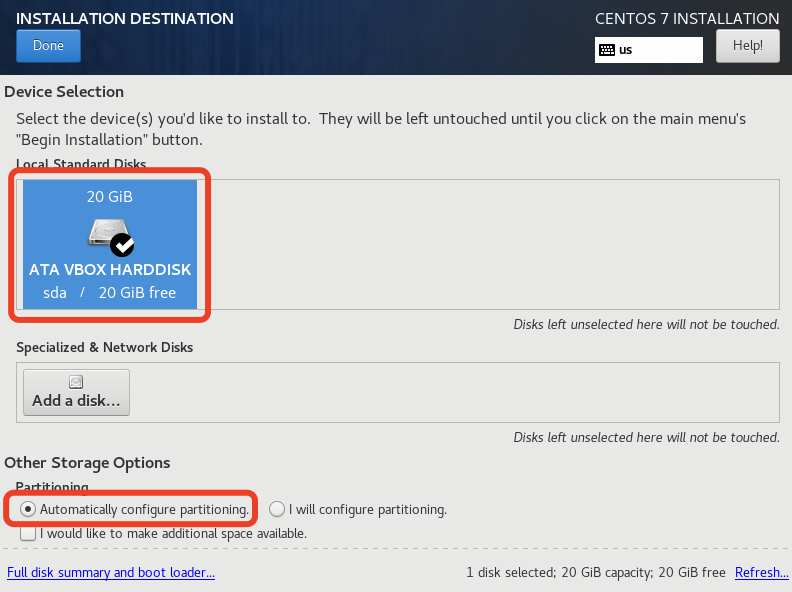

- Select the installation disk and partition it (automatic partitioning is fine);

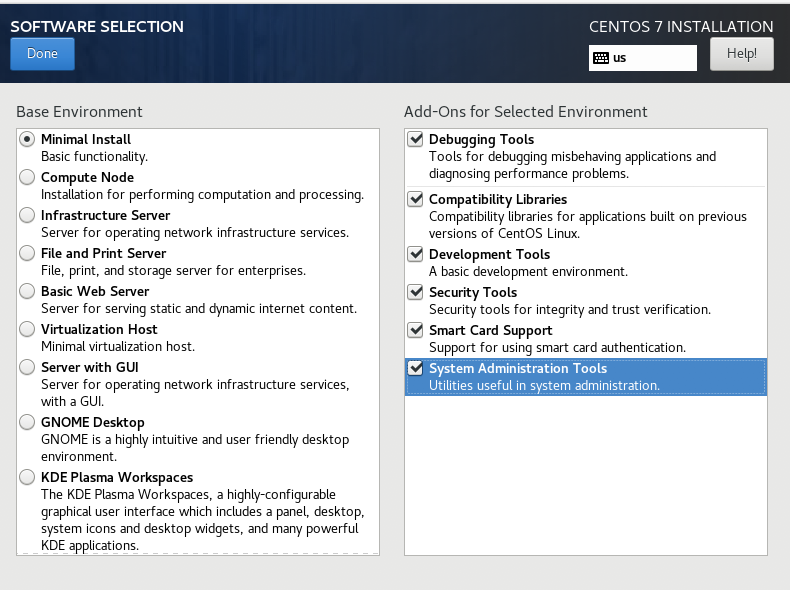

- For the installation mode choose [Minimal Install] and select all of the add-ons;

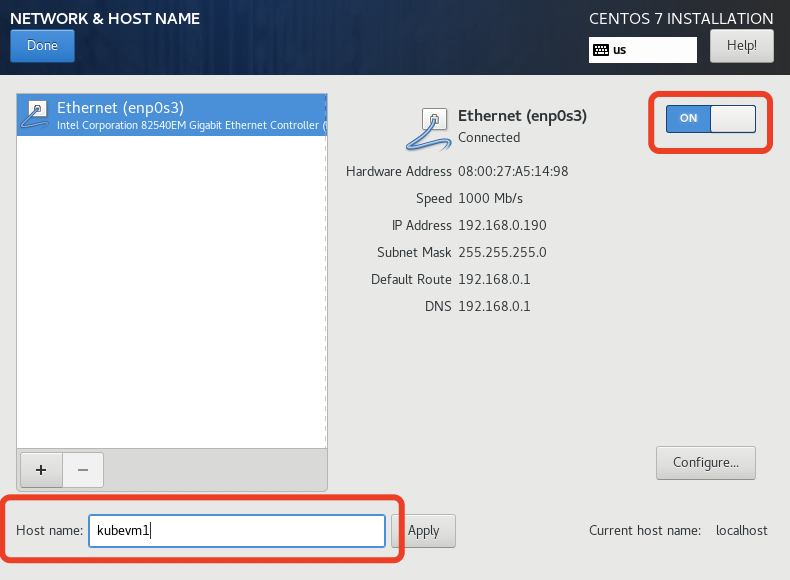

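- Under “NETWORK & HOST NAME”, turn on OnBoot. If you don't set the host name here, you can set it after installation with the "hostnamectl" command;

- During installation you can set the root password or add a new user. To keep things simple we just use the root account here.

Once the installation finishes, reboot the virtual machine. For convenience, we SSH into the virtual machine from a terminal on the host.

PS: Because this is a minimal install, the ifconfig command is not available, so we get the VM's IP address with ip addr and then log in over SSH.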
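To pull just the address out of that output, something like the following could work. This is only a sketch: the function name `global_ipv4_addresses` is made up for illustration, and it assumes the one-line format printed by `ip -4 -o addr show scope global`.

```shell
# Hypothetical helper: print the IPv4 addresses found in the one-line
# output of `ip -4 -o addr show scope global`, read from stdin.
global_ipv4_addresses() {
    # In one-line (-o) mode field 4 is ADDRESS/PREFIX, e.g. 192.168.0.187/24.
    # Split on "/" and keep only the address part.
    awk '{ split($4, parts, "/"); print parts[1] }'
}
```

On the VM it would be used as `ip -4 -o addr show scope global | global_ipv4_addresses`.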

3. System settings

1) Disable SELinux

- Temporarily: run setenforce 0 on the command line

- Permanently: edit /etc/selinux/config and change SELINUX=enforcing to SELINUX=disabled
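The permanent change can also be scripted instead of edited by hand. A minimal sketch, assuming the config file has a line that starts with `SELINUX=`; the function name `set_selinux_disabled` is hypothetical, and the file path is passed as an argument so it can be tried on a copy first.

```shell
# Hypothetical helper: rewrite the SELINUX= line of a SELinux config file.
set_selinux_disabled() {
    local config_file="$1"
    # Replace whatever mode is currently set with "disabled".
    # The ^SELINUX= anchor leaves SELINUXTYPE= and comment lines untouched.
    sed -i 's/^SELINUX=.*/SELINUX=disabled/' "$config_file"
}
```

Usage on the VM would be `set_selinux_disabled /etc/selinux/config`. The change only takes effect after a reboot, which is why the temporary setenforce 0 is still needed for the current session.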

2) Turn off the firewall

systemctl disable firewalld && systemctl stop firewalld

3) Turn off swap

swapoff -a && sed -i '/ swap / s/^/#/' /etc/fstab
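To confirm that no swap area is still active afterwards, we can look at /proc/swaps, which lists one header line followed by one line per active swap area. A small sketch, with `swap_is_off` as a made-up name; the optional argument exists only so the check can be run against a sample file.

```shell
# Hypothetical helper: succeed (exit 0) when no swap area is active.
swap_is_off() {
    local swaps_file="${1:-/proc/swaps}"
    # Line 1 is the header; any further line is an active swap area.
    awk 'NR > 1 { active = 1 } END { exit active }' "$swaps_file"
}
```

For example: `swap_is_off && echo "swap is off"`.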

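4) Adjust the iptables settings

echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

PS: If you get an error saying bridge-nf-call-iptables cannot be found, run the following command to load the kernel module first, then retry: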

modprobe br_netfilter
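Note that both the echo and the modprobe only last until the next reboot. One way to make them persistent is to drop small config files into /etc/modules-load.d and /etc/sysctl.d. The sketch below is an assumption on my part rather than something from the original setup: the function name `persist_bridge_settings` and the `k8s.conf` file names are arbitrary, and the optional argument is a root prefix so the function can be tried against a scratch directory.

```shell
# Hypothetical helper: persist the bridge netfilter settings across reboots.
# The k8s.conf file names are an assumption; any *.conf file in these
# directories is read at boot.
persist_bridge_settings() {
    local root="${1:-}"
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d"

    # Load br_netfilter at boot so the bridge-nf-call-* keys exist.
    printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"

    # Same value as the manual echo into /proc, applied at boot.
    printf 'net.bridge.bridge-nf-call-iptables = 1\n' > "$root/etc/sysctl.d/k8s.conf"
}
```

On the VM, `persist_bridge_settings && sysctl --system` would write the files and apply the sysctl setting right away.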

II. Installing Docker

yum install docker -y

III. Installing Kubernetes

1. Configure the yum repository

    cat > /etc/yum.repos.d/kubernetes.repo << EOF
    [kubernetes]
    name=Kubernetes
    baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
    enabled=1
    gpgcheck=0
    repo_gpgcheck=0
    gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
    EOF

2. Add a Docker registry mirror

vim /etc/docker/daemon.json

Add the following entry to the file's top-level JSON object:

    "registry-mirrors": ["https://registry.docker-cn.com"]

3. Install kubectl, kubeadm, and kubelet

yum install -y kubelet-1.19.16 kubeadm-1.19.16 kubectl-1.19.16

4. Start docker and kubelet

systemctl enable docker && systemctl start docker

systemctl enable kubelet && systemctl start kubelet

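IV. Installing and Configuring the Kubernetes Cluster

1. Clone the virtual machine and configure hosts

First we clone the virtual machine twice and change the hostnames of the two clones to kubevm2 and kubevm3 respectively.

hostnamectl set-hostname xxx

Write the addresses of the three virtual machines into the hosts file on the host machine and on every virtual machine:

vim /etc/hosts

    192.168.0.187 kubevm1
    192.168.0.185 kubevm2
    192.168.0.184 kubevm3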
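Since the same three entries have to go onto four machines, it can be handy to script this instead of editing by hand. A minimal sketch, where `add_host_entry` is a made-up helper that appends an entry only if the hostname is not already present, so it is safe to run more than once:

```shell
# Hypothetical helper: append "IP NAME" to a hosts file unless NAME is
# already listed there.
add_host_entry() {
    local hosts_file="$1" ip="$2" name="$3"
    # Match the name as a whole word: preceded by whitespace and followed
    # by whitespace or end of line (so kubevm1 does not match kubevm10).
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}
```

For example, `add_host_entry /etc/hosts 192.168.0.187 kubevm1`, repeated for kubevm2 and kubevm3 with their addresses.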

2. Initialize the master (kubevm1)

Run kubeadm init on kubevm1:

    [root@kubevm1 ~]# kubeadm init --apiserver-advertise-address=192.168.56.120 --image-repository=registry.aliyuncs.com/google_containers --kubernetes-version=v1.19.16 --service-cidr=10.1.0.0/16 --pod-network-cidr=10.244.0.0/16
    W0518 23:27:14.470037 2551 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]
    [init] Using Kubernetes version: v1.19.16
    [preflight] Running pre-flight checks
    [preflight] Pulling images required for setting up a Kubernetes cluster
    [preflight] This might take a minute or two, depending on the speed of your internet connection
    [preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
    [certs] Using certificateDir folder "/etc/kubernetes/pki"
    [certs] Generating "ca" certificate and key
    [certs] Generating "apiserver" certificate and key
    [certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local kubevm1] and IPs [10.1.0.1 192.168.56.120]
    [certs] Generating "apiserver-kubelet-client" certificate and key
    [certs] Generating "front-proxy-ca" certificate and key
    [certs] Generating "front-proxy-client" certificate and key
    [certs] Generating "etcd/ca" certificate and key
    [certs] Generating "etcd/server" certificate and key
    [certs] etcd/server serving cert is signed for DNS names [kubevm1 localhost] and IPs [192.168.56.120 127.0.0.1 ::1]
    [certs] Generating "etcd/peer" certificate and key
    [certs] etcd/peer serving cert is signed for DNS names [kubevm1 localhost] and IPs [192.168.56.120 127.0.0.1 ::1]
    [certs] Generating "etcd/healthcheck-client" certificate and key
    [certs] Generating "apiserver-etcd-client" certificate and key
    [certs] Generating "sa" key and public key
    [kubeconfig] Using kubeconfig folder "/etc/kubernetes"
    [kubeconfig] Writing "admin.conf" kubeconfig file
    [kubeconfig] Writing "kubelet.conf" kubeconfig file
    [kubeconfig] Writing "controller-manager.conf" kubeconfig file
    [kubeconfig] Writing "scheduler.conf" kubeconfig file
    [kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
    [kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
    [kubelet-start] Starting the kubelet
    [control-plane] Using manifest folder "/etc/kubernetes/manifests"
    [control-plane] Creating static Pod manifest for "kube-apiserver"
    [control-plane] Creating static Pod manifest for "kube-controller-manager"
    [control-plane] Creating static Pod manifest for "kube-scheduler"
    [etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"
    [wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s
    [kubelet-check] Initial timeout of 40s passed.
    [apiclient] All control plane components are healthy after 43.002951 seconds
    [upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
    [kubelet] Creating a ConfigMap "kubelet-config-1.19" in namespace kube-system with the configuration for the kubelets in the cluster
    [upload-certs] Skipping phase. Please see --upload-certs
    [mark-control-plane] Marking the node kubevm1 as control-plane by adding the label "node-role.kubernetes.io/master=''"
    [mark-control-plane] Marking the node kubevm1 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]
    [bootstrap-token] Using token: wribbh.31c6e1tnddpnpwn9
    [bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes
    [bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
    [bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
    [bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
    [bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
    [kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key
    [addons] Applied essential addon: CoreDNS
    [addons] Applied essential addon: kube-proxy

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    Then you can join any number of worker nodes by running the following on each as root:

    kubeadm join 192.168.56.120:6443 --token wribbh.31c6e1tnddpnpwn9 \
        --discovery-token-ca-cert-hash sha256:1804e7ee43d7469839b3f5fdbf2c57f5d53eee1da6bc40c59a1b04fce6edddd5

Next we run the following commands so that we can manage the cluster with kubectl:

    mkdir -p $HOME/.kube
    sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    sudo chown $(id -u):$(id -g) $HOME/.kube/config

Let's first check the status of the cluster components:

    [root@kubevm1 ~]# kubectl get pods,svc -n kube-system
    NAME                                  READY   STATUS    RESTARTS   AGE
    pod/coredns-6d56c8448f-jf9gg          0/1     Pending   0          2m4s
    pod/coredns-6d56c8448f-m2cdp          0/1     Pending   0          2m3s
    pod/etcd-kubevm1                      1/1     Running   0          2m17s
    pod/kube-apiserver-kubevm1            1/1     Running   0          2m17s
    pod/kube-controller-manager-kubevm1   1/1     Running   0          2m17s
    pod/kube-proxy-rv7g4                  1/1     Running   0          2m4s
    pod/kube-scheduler-kubevm1            1/1     Running   0          2m17s

    NAME               TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
    service/kube-dns   ClusterIP   10.1.0.10    <none>        53/UDP,53/TCP,9153/TCP   2m19s

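We can see that both coredns Pods are stuck in Pending and are not ready. This is because we have not installed a network add-on yet.

3. Install the network plugin

Download flannel's YAML manifest: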

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

At this point, confirm that the Network value in the YAML matches the value we passed to --pod-network-cidr when running kubeadm init earlier.
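This check can be done from the command line rather than by opening the file. A rough sketch, assuming the manifest holds the setting as a JSON line of the form `"Network": "10.244.0.0/16"` inside its net-conf.json section; `flannel_network` is a hypothetical helper name.

```shell
# Hypothetical helper: print the value of the first "Network" key found
# in a flannel manifest.
flannel_network() {
    local manifest="$1"
    # Capture the quoted value that follows "Network": and print only it.
    sed -n 's/.*"Network" *: *"\([^"]*\)".*/\1/p' "$manifest" | head -n 1
}
```

For example, `[ "$(flannel_network kube-flannel.yml)" = "10.244.0.0/16" ] && echo "CIDR matches"`. If the values differ, edit the manifest before applying it.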

kubectl apply -f kube-flannel.yml

After a short wait, we can see that the Pods for the cluster's network services are all OK:

    [root@kubevm1 ~]# kubectl get pods,svc -n kube-system
    NAME                                  READY   STATUS    RESTARTS   AGE
    pod/coredns-6d56c8448f-jf9gg          1/1     Running   0          28m
    pod/coredns-6d56c8448f-m2cdp          1/1     Running   0          28m
    pod/etcd-kubevm1                      1/1     Running   0          28m
    pod/kube-apiserver-kubevm1            1/1     Running   0          28m
    pod/kube-controller-manager-kubevm1   1/1     Running   0          28m
    pod/kube-flannel-ds-td89l             1/1     Running   0          13m
    pod/kube-proxy-rv7g4                  1/1     Running   0          28m
    pod/kube-scheduler-kubevm1            1/1     Running   0          28m

    NAME               TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
    service/kube-dns   ClusterIP   10.1.0.10    <none>        53/UDP,53/TCP,9153/TCP   28m

4. Register the nodes

Run the following command on kubevm2 and kubevm3 to register them with the master:

    kubeadm join 192.168.56.120:6443 --token wribbh.31c6e1tnddpnpwn9 \
        --discovery-token-ca-cert-hash sha256:1804e7ee43d7469839b3f5fdbf2c57f5d53eee1da6bc40c59a1b04fce6edddd5
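The join command is printed at the very end of the kubeadm init output, so it is easy to lose in the scrollback. If that output was saved to a file (an assumption here, for example by piping kubeadm init through tee), a helper like the one below could pull the command back out as a single line. The name `extract_join_command` is made up for illustration.

```shell
# Hypothetical helper: print the "kubeadm join ..." command from a saved
# kubeadm init log as one line, joining the backslash-continued lines.
extract_join_command() {
    local log_file="$1"
    awk '
        /^[ \t]*kubeadm join/ { capture = 1 }
        capture {
            line = $0
            # Drop a trailing backslash and remember whether one was there.
            continued = sub(/\\[ \t]*$/, "", line)
            # Trim leading and trailing blanks.
            gsub(/^[ \t]+|[ \t]+$/, "", line)
            printf "%s%s", sep, line
            sep = " "
            if (!continued) { print ""; exit }
        }
    ' "$log_file"
}
```

If the log is gone or the token has expired, running `kubeadm token create --print-join-command` on the master prints a fresh join command.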

After registering, we check the result with kubectl get nodes:

    [root@kubevm1 ~]# kubectl get nodes
    NAME      STATUS   ROLES    AGE   VERSION
    kubevm1   Ready    master   46m   v1.19.16
    kubevm2   Ready    <none>   15m   v1.19.16
    kubevm3   Ready    <none>   14m   v1.19.16
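Freshly joined nodes usually take a little while to become Ready. To check this from a script instead of by eye, a small filter over the kubectl output is enough. A minimal sketch, where `all_nodes_ready` is a hypothetical name and the input is expected to come from `kubectl get nodes --no-headers`:

```shell
# Hypothetical helper: succeed (exit 0) only if every node listed on stdin
# has STATUS exactly "Ready". Expects `kubectl get nodes --no-headers` output.
all_nodes_ready() {
    # Column 2 is STATUS. A cordoned node ("Ready,SchedulingDisabled")
    # counts as not ready here, and so does empty input.
    awk '$2 != "Ready" { bad = 1 } END { exit (bad || NR == 0) }'
}
```

For example: `kubectl get nodes --no-headers | all_nodes_ready && echo "cluster is up"`.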

OK, that completes the cluster setup.