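Building a Kubernetes Test Cluster from Scratch

Environment Setup

This article walks through the complete process of creating several virtual machines from scratch and assembling them into a test Kubernetes (k8s) cluster, and records the pitfalls encountered along the way.

Creating the virtual machines

Install Vagrant and VirtualBox.

Create two directories (one per virtual machine). In each directory, run vagrant init centos/7 to initialize it, then run vagrant up. Then go have lunch while the virtual machine is being set up.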

D:\vm2>vagrant init centos/7

A `Vagrantfile` has been placed in this directory. You are now

ready to `vagrant up` your first virtual environment! Please read

the comments in the Vagrantfile as well as documentation on

`vagrantup.com` for more information on using Vagrant.

D:\vm2>vagrant up

Bringing machine 'default' up with 'virtualbox' provider...

==> default: Importing base box 'centos/7'...

==> default: Matching MAC address for NAT networking...

==> default: Checking if box 'centos/7' version '2004.01' is up to date...

==> default: Setting the name of the VM: vm2_default_1608174748422_96033

==> default: Fixed port collision for 22 => 2222. Now on port 2200.

==> default: Clearing any previously set network interfaces...

==> default: Preparing network interfaces based on configuration...

default: Adapter 1: nat

==> default: Forwarding ports...

default: 22 (guest) => 2200 (host) (adapter 1)

==> default: Booting VM...

==> default: Waiting for machine to boot. This may take a few minutes...

default: SSH address: 127.0.0.1:2200

default: SSH username: vagrant

default: SSH auth method: private key

default:

default: Vagrant insecure key detected. Vagrant will automatically replace

default: this with a newly generated keypair for better security.

default:

default: Inserting generated public key within guest...

default: Removing insecure key from the guest if it's present...

default: Key inserted! Disconnecting and reconnecting using new SSH key...

==> default: Machine booted and ready!

==> default: Checking for guest additions in VM...

default: No guest additions were detected on the base box for this VM! Guest

default: additions are required for forwarded ports, shared folders, host only

default: networking, and more. If SSH fails on this machine, please install

default: the guest additions and repackage the box to continue.

default:

default: This is not an error message; everything may continue to work properly,

default: in which case you may ignore this message.

==> default: Rsyncing folder: /cygdrive/d/vm2/ => /vagrant

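Vagrant installs and boots the virtual machine for us and creates a vagrant account with the password vagrant; the root password is also vagrant. The Vagrantfile generated in the current directory needs a few small changes so that the VM settings meet the k8s requirements and the VMs can reach each other over the network.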

# Configure a public (bridged) network; the IP can be set explicitly, or omitted to get an address from DHCP
config.vm.network "public_network", ip: "192.168.56.10"

config.vm.provider "virtualbox" do |vb|
  # Display the VirtualBox GUI when booting the machine
  # vb.gui = true

  # Set the memory size and the number of CPU cores
  # Customize the amount of memory on the VM:
  vb.memory = "4096"
  vb.cpus = 2
end

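After making the changes, run vagrant reload to restart the virtual machine so that the configuration takes effect.

With the bridged network, a VM could ping other VMs by default, but the host could not ping the VM. When I assigned an IP in the same subnet as the host (after confirming that the address was free), the host could ping the VM, but the VM could not ping the host, and the default DHCP could not assign the VM an address. This is probably related to the company network: a VM that wants to reach other machines likely has to join the domain first to be granted access.

About virtual machine networking

Here are the main network models for virtual machines:

- NAT (Network Address Translation)

- Bridged

- Host-only

- Internal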

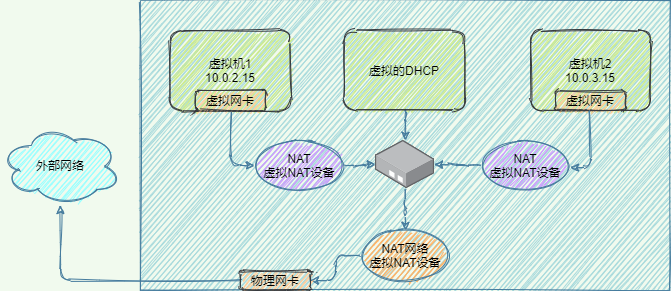

NAT

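NAT is the network model Vagrant sets up by default. The VM is not in the same subnet as the physical machine. It can reach the external network, with a virtual NAT device translating addresses. Strictly speaking, NAT comes in two variants:

- NAT: VMs are isolated from one another and cannot communicate. As the figure below shows, each VM's virtual NIC is attached to its own virtual NAT device (the purple NATs in the figure, without the orange one)

- NAT network: VMs on the same NAT network can reach each other and share one virtual NAT device (the orange NAT in the figure, without the purple ones)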

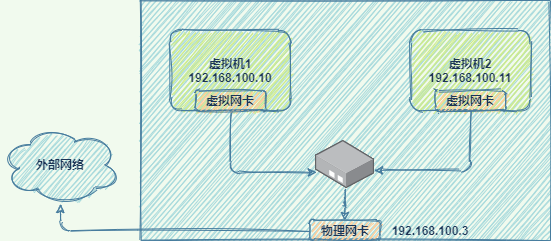

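Bridged network

Bridged networking is simple and convenient: all VMs and the host are on the same network, and other machines on the host's network can reach a VM just as they reach the host, as if the VM were a real network device. It is the most full-featured network model. The downside is that with many VMs, broadcast traffic becomes expensive.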

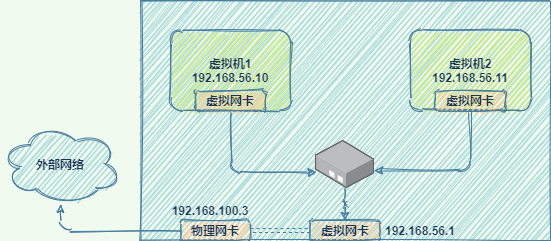

Host-only

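A host-only network confines networking to the host. By default it cannot reach the external network, and the VMs are on a different subnet from the host's physical network, but the host and the VMs, as well as the VMs themselves, can all reach each other. External access can also be enabled through configuration: in the properties of the host's physical network adapter (Sharing), bridge or share the physical adapter with the virtual adapter.

Internal network

This is a simple network model: the VMs are completely cut off from the outside environment and can only reach one another. It is rarely used.

Summary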

| Model | VM -> host | host -> VM | VM <-> VM | VM -> Internet | Internet -> VM |
|---|---|---|---|---|---|
| Bridged | + | + | + | + | + |
| NAT | + | Port Forwarding | – | + | Port Forwarding |
| NAT Network | + | Port Forwarding | + | + | Port Forwarding |
| Host-only | + | + | + | – | – |
| Internal | – | – | + | – | – |

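About Vagrant networking

Vagrant supports three kinds of network configuration, all set in the Vagrantfile:

- Port forwarding: for example, requests to port 8080 on the host are forwarded to port 80 on the VM (TCP by default; to forward UDP, set protocol to udp)

config.vm.network "forwarded_port", guest: 80, host: 8080

- Private network: corresponds to a host-only network. The host can reach the VMs and the VMs can reach one another, but other machines cannot reach the VMs, so it is more secure

config.vm.network "private_network", ip: "192.168.21.4"

- Public network: corresponds to a bridged network; the VM behaves like a standalone network device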

config.vm.network "public_network", ip: "192.168.1.120"
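As a rough sketch of how these options could be applied to the two VMs in this article, the function below writes a Vagrantfile for one VM directory. The helper name write_vagrantfile, the hostnames, and the choice of private_network are assumptions made for illustration; the box name, memory and CPU values, and the 192.168.205.x addresses mirror values that appear elsewhere in this article.

```shell
#!/bin/sh
# Sketch: generate a Vagrantfile for one VM directory.
# write_vagrantfile is a hypothetical helper, not part of Vagrant.
# $1 = target directory, $2 = hostname, $3 = IP on the host-only network
write_vagrantfile() {
  mkdir -p "$1" || return 1
  cat > "$1/Vagrantfile" <<EOF
Vagrant.configure("2") do |config|
  config.vm.box = "centos/7"
  config.vm.hostname = "$2"
  # Host-only network: reachable from the host and from the other VMs
  config.vm.network "private_network", ip: "$3"
  config.vm.provider "virtualbox" do |vb|
    vb.memory = "4096"
    vb.cpus = 2
  end
end
EOF
}

# Example (not executed here): one directory per VM, then boot each one.
# write_vagrantfile vm1 vm1 192.168.205.10 && (cd vm1 && vagrant up)
# write_vagrantfile vm2 vm2 192.168.205.11 && (cd vm2 && vagrant up)
```

Generating the file rather than editing it by hand keeps the two VMs identical apart from hostname and address, which makes later networking problems easier to narrow down.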

Installing Docker

Official Docker documentation: https://docs.docker.com/engine/install/

- Set up the official repository

$ sudo yum install -y yum-utils

$ sudo yum-config-manager \
    --add-repo \
    https://download.docker.com/linux/centos/docker-ce.repo

- Install Docker Engine

$ sudo yum install docker-ce docker-ce-cli containerd.io

- Start Docker

$ sudo systemctl start docker
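The kubeadm preflight output later in this article warns that the Docker service is not enabled and that Docker uses the cgroupfs cgroup driver. Both could be handled at this point. The following is a minimal sketch, assuming Docker reads its configuration from the default /etc/docker/daemon.json and that no existing daemon.json needs to be preserved; write_docker_daemon_json is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: switch Docker to the systemd cgroup driver.
# write_docker_daemon_json is a hypothetical helper; it overwrites the target
# file, so merge by hand if a daemon.json already exists.
# $1 = path to write (defaults to Docker's standard config location)
write_docker_daemon_json() {
  target="${1:-/etc/docker/daemon.json}"
  mkdir -p "$(dirname "$target")" || return 1
  cat > "$target" <<'EOF'
{
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Example (run as root on every node, not executed here):
# write_docker_daemon_json
# systemctl enable docker.service   # start Docker at boot
# systemctl restart docker          # pick up the new cgroup driver
# docker info | grep -i cgroup      # check which driver is active
```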

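Installing k8s

Official k8s documentation: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/

- Make sure the iptables tooling does not use the nftables backend (this matters on distributions where iptables defaults to nftables; CentOS 7 still ships the legacy iptables, so the commands below are probably unnecessary there)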

update-alternatives --set iptables /usr/sbin/iptables-legacy

update-alternatives --set ip6tables /usr/sbin/ip6tables-legacy

update-alternatives --set arptables /usr/sbin/arptables-legacy

update-alternatives --set ebtables /usr/sbin/ebtables-legacy

- Configure the repository, then install kubelet, kubeadm, and kubectl

cat <<EOF > /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg
EOF

# Set SELinux to permissive mode (effectively disabling it)

setenforce 0

sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

yum install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

systemctl enable --now kubelet

Initializing the cluster

kubeadm init

Run kubeadm init on the master node

[root@localhost vagrant]# kubeadm init

[init] Using Kubernetes version: v1.20.0

[preflight] Running pre-flight checks

[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.1. Latest validated version: 19.03

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR NumCPU]: the number of available CPUs 1 is less than the required 2

[ERROR Mem]: the system RAM (486 MB) is less than the minimum 1700 MB

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables contents are not set to 1

[ERROR Swap]: running with swap on is not supported. Please disable swap

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

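- docker service is not enabled warning: run systemctl enable docker.service to fix it; Docker will then start at boot

- cgroupfs warning: systemd is considered a more stable cgroup driver, so kubeadm recommends it; for the settings of the various CRIs see https://kubernetes.io/docs/setup/cri/

- Docker version warning: my Docker version is too new and has not been validated upstream yet; the latest validated version is 19.03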

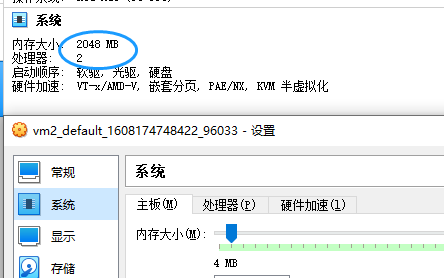

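- CPU and memory errors: open VirtualBox and, in the VM's settings, raise the number of CPU cores to 2 or more and the memory to 1700 MB or more (or set vb.cpus and vb.memory in the Vagrantfile as shown earlier and run vagrant reload)

- FileContent--proc-sys-net-bridge-bridge-nf-call-iptables error: bridged traffic bypasses iptables and therefore is not routed correctly; run the following commands to fix it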

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system

- Swap error: run sudo swapoff -a to disable swap. This has to be repeated after every reboot; to make it permanent, edit the /etc/fstab file and comment out the swap line
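The fstab edit for the swap error could also be scripted. This is only a sketch: comment_out_swap is a hypothetical helper name, and it assumes the swap entry is an ordinary fstab line with swap as a whitespace-separated field. It keeps a .bak copy of the file it changes.

```shell
#!/bin/sh
# Sketch: comment out active swap entries in an fstab-style file.
# comment_out_swap is a hypothetical helper name.
# $1 = file to edit (defaults to /etc/fstab); a .bak copy is kept.
comment_out_swap() {
  fstab="${1:-/etc/fstab}"
  cp "$fstab" "$fstab.bak" || return 1
  # Prefix '#' to lines that contain a "swap" field and are not already comments.
  sed 's/^\([^#].*[[:space:]]swap[[:space:]].*\)$/#\1/' "$fstab.bak" > "$fstab"
}

# Example (run as root, not executed here):
# swapoff -a          # turn swap off for the running system
# comment_out_swap    # keep it off after a reboot
```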

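Vagrant network settings

Vagrant uses a NAT network by default. A VM can reach the host and the external network, but multiple VMs cannot reach one another, so the cluster nodes need a second interface (eth1 in this setup) that they can use to talk to each other.

Joining the cluster

Run kubeadm init on the master node, paying attention to two points:

- --apiserver-advertise-address "192.168.205.10" selects the address on vm1's eth1 interface, the only interface that can reach the other VMs

- --pod-network-cidr=10.244.0.0/16 prepares for the flannel network plugin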
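Rather than typing the advertise address by hand, it could be read from the interface. The sketch below assumes the inter-VM interface is named eth1, as in this article, and that the ip command prints its usual one-line-per-address format; first_ipv4 is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: read an IPv4 address from `ip -o -4 addr show` output.
# first_ipv4 is a hypothetical helper; it expects the one-line-per-address
# format on stdin and prints the first address without the prefix length.
first_ipv4() {
  awk '$3 == "inet" { split($4, parts, "/"); print parts[1]; exit }'
}

# Example (run on vm1, not executed here):
# addr=$(ip -o -4 addr show dev eth1 | first_ipv4)
# kubeadm init --apiserver-advertise-address "$addr" --pod-network-cidr=10.244.0.0/16
```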

[root@vm1 vagrant]# kubeadm init --apiserver-advertise-address "192.168.205.10" --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.20.0

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.1. Latest validated version: 19.03

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local vm1] and IPs [10.96.0.1 192.168.205.10]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost vm1] and IPs [192.168.205.10 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost vm1] and IPs [192.168.205.10 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 17.508748 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.20" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node vm1 as control-plane by adding the labels "node-role.kubernetes.io/master=''" and "node-role.kubernetes.io/control-plane='' (deprecated)"

[mark-control-plane] Marking the node vm1 as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: 2ckaow.r0ed8bpcy7sdx9kj

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

#1.1 Important! Before using the cluster, run the following commands

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

#1.2 Or, if you are the root user, run this single line instead

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

#2 Next, deploy a pod network to the cluster

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

#3 Then run the following command to join worker nodes to the cluster

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.205.10:6443 --token paattq.r3qp8kksjl0yukls \

--discovery-token-ca-cert-hash sha256:f18d1e87c8b1d041bc7558eedd2857c2ad7094b1b2c6aa8388d0ef51060e4c0f

Configuring kubeconfig

As kubeadm's output instructs, run the following commands to set up kubeconfig before accessing the cluster

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Otherwise the errors below appear. After running the commands above, kubectl get nodes works normally

[root@vm1 vagrant]# kubectl get nodes

The connection to the server localhost:8080 was refused - did you specify the right host or port?

Or:

[root@vm1 vagrant]# kubectl get nodes

Unable to connect to the server: x509: certificate signed by unknown authority (possibly because of "crypto/rsa: verification error" while trying to verify candidate authority certificate "kubernetes")

Now look at the status of the k8s system pods: only kube-proxy and kube-apiserver are ready

[root@vm1 vagrant]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-74ff55c5b-4ndtt 0/1 ContainerCreating 0 43s

coredns-74ff55c5b-tmc7n 0/1 ContainerCreating 0 43s

etcd-vm1 0/1 Running 0 51s

kube-apiserver-vm1 1/1 Running 0 51s

kube-controller-manager-vm1 0/1 Running 0 51s

kube-proxy-5mvwf 1/1 Running 0 44s

kube-scheduler-vm1 0/1 Running 0 51s
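To pick out the pods that are not ready without scanning the table by eye, the READY column can be filtered. This is a small sketch; not_ready_pods is a hypothetical helper name, and it assumes the default kubectl get pods column layout shown above.

```shell
#!/bin/sh
# Sketch: list pods whose READY column shows fewer ready containers than total.
# not_ready_pods is a hypothetical helper; it reads `kubectl get pods` output
# (header line included) on stdin.
not_ready_pods() {
  awk 'NR > 1 { split($2, r, "/"); if (r[1] != r[2]) print $1 }'
}

# Example (not executed here):
# kubectl get pods -n kube-system | not_ready_pods
```

Applied to the listing above, this would print the two coredns pods along with etcd-vm1, kube-controller-manager-vm1, and kube-scheduler-vm1.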

Deploying the pod network

Next, deploy a pod network; otherwise the node stays in the NotReady state, as shown below

[root@vm1 vagrant]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

vm1 NotReady control-plane,master 4m v1.20.0

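For the available network add-ons, see https://kubernetes.io/docs/concepts/cluster-administration/addons/. Here I chose the well-known flannel plugin. When kubeadm is used, flannel requires the --pod-network-cidr flag to be passed to kubeadm init so that the pod CIDR is set.

NOTE: If kubeadm is used, then pass --pod-network-cidr=10.244.0.0/16 to kubeadm init to ensure that the podCIDR is set.

In addition, because these VMs were created by Vagrant, the default eth0 interface is a NAT interface: it allows access from the VM to the outside, but not from the outside into the VM. So we need to point flannel at an interface that can communicate with the other machines, namely the bridged interface eth1: edit kube-flannel.yml and add the --iface=eth1 argument.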

      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.13.1-rc1
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        - --iface=eth1 # extra startup argument for the kube-flannel container

Run the following commands to create the flannel network

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/k8s-manifests/kube-flannel.yml

kubectl apply -f kube-flannel.yml
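If editing the manifest by hand feels error-prone, the --iface argument could be inserted by a small script between the wget and kubectl apply steps above. The sketch below assumes the manifest lists - --kube-subnet-mgr on its own line, as in the fragment shown earlier; add_flannel_iface is a hypothetical helper name.

```shell
#!/bin/sh
# Sketch: add "- --iface=<name>" after "- --kube-subnet-mgr" in a flannel manifest.
# add_flannel_iface is a hypothetical helper name.
# $1 = manifest path, $2 = interface name (eth1 in this article)
add_flannel_iface() {
  # Do nothing if the argument is already present.
  if grep -q -- "--iface=$2" "$1"; then
    return 0
  fi
  awk -v iface="$2" '
    { print }
    /^[ \t]*- --kube-subnet-mgr[ \t]*$/ {
      indent = $0
      sub(/-.*/, "", indent)          # keep only the leading whitespace
      print indent "- --iface=" iface
    }
  ' "$1" > "$1.tmp" && mv "$1.tmp" "$1"
}

# Example (not executed here):
# add_flannel_iface kube-flannel.yml eth1
# kubectl apply -f kube-flannel.yml
```

The new line reuses the indentation of the line it follows, so the YAML list stays aligned, and the guard at the top means a second run leaves the file unchanged.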

After the network is deployed, check the pod status again after a short while: all pods are ready, and a new kube-flannel-ds pod has been started

[root@vm1 vagrant]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-74ff55c5b-4ndtt 1/1 Running 0 88s

coredns-74ff55c5b-tmc7n 1/1 Running 0 88s

etcd-vm1 1/1 Running 0 96s

kube-apiserver-vm1 1/1 Running 0 96s

kube-controller-manager-vm1 1/1 Running 0 96s

kube-flannel-ds-dnw4d 1/1 Running 0 19s

kube-proxy-5mvwf 1/1 Running 0 89s

kube-scheduler-vm1 1/1 Running 0 96s

Joining the cluster

Finally, run kubeadm join on vm2 to join the cluster

[root@vm2 vagrant]# kubeadm join 192.168.205.10:6443 --token paattq.r3qp8kksjl0yukls \

> --discovery-token-ca-cert-hash sha256:f18d1e87c8b1d041bc7558eedd2857c2ad7094b1b2c6aa8388d0ef51060e4c0f

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.1. Latest validated version: 19.03

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.
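The token printed by kubeadm init expires after 24 hours by default, so a node added later needs a fresh join command. A minimal sketch of how one could be printed on the control-plane node follows; print_join_command and the KUBEADM override variable are hypothetical names introduced here, while the kubeadm subcommand and flag are the standard ones.

```shell
#!/bin/sh
# Sketch: print a fresh `kubeadm join` command on the control-plane node.
# print_join_command is a hypothetical wrapper; KUBEADM lets the binary be
# overridden and defaults to the kubeadm found on PATH.
print_join_command() {
  "${KUBEADM:-kubeadm}" token create --print-join-command
}

# Example (run as root on vm1, not executed here):
# print_join_command
```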

After joining, ip a shows the network devices: k8s (through flannel and CNI) has additionally created flannel.1, cni0, and veth devices

[root@vm2 vagrant]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 52:54:00:4d:77:d3 brd ff:ff:ff:ff:ff:ff

inet 10.0.2.15/24 brd 10.0.2.255 scope global noprefixroute dynamic eth0

valid_lft 85901sec preferred_lft 85901sec

inet6 fe80::5054:ff:fe4d:77d3/64 scope link

valid_lft forever preferred_lft forever

3: eth1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 08:00:27:f1:20:55 brd ff:ff:ff:ff:ff:ff

inet 192.168.205.11/24 brd 192.168.205.255 scope global noprefixroute eth1

valid_lft forever preferred_lft forever

inet6 fe80::a00:27ff:fef1:2055/64 scope link

valid_lft forever preferred_lft forever

4: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:6f:03:4a:68 brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

5: flannel.1: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UNKNOWN group default

link/ether 6e:99:9d:7b:08:ec brd ff:ff:ff:ff:ff:ff

inet 10.244.1.0/32 brd 10.244.1.0 scope global flannel.1

valid_lft forever preferred_lft forever

inet6 fe80::6c99:9dff:fe7b:8ec/64 scope link

valid_lft forever preferred_lft forever

6: cni0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue state UP group default qlen 1000

link/ether 4a:fe:72:33:fc:85 brd ff:ff:ff:ff:ff:ff

inet 10.244.1.1/24 brd 10.244.1.255 scope global cni0

valid_lft forever preferred_lft forever

inet6 fe80::48fe:72ff:fe33:fc85/64 scope link

valid_lft forever preferred_lft forever

7: veth7981bae1@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master cni0 state UP group default

link/ether 1a:87:b6:82:c7:5c brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet6 fe80::1887:b6ff:fe82:c75c/64 scope link

valid_lft forever preferred_lft forever

8: veth54bbbfd5@if3: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1450 qdisc noqueue master cni0 state UP group default

link/ether 46:36:5d:96:a0:69 brd ff:ff:ff:ff:ff:ff link-netnsid 1

inet6 fe80::4436:5dff:fe96:a069/64 scope link

valid_lft forever preferred_lft forever

Now check the node status on vm1 again: there are two Ready nodes

[root@vm1 vagrant]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

vm1 Ready control-plane,master 5h58m v1.20.0

vm2 Ready <none> 5h54m v1.20.0

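Deploying a service

Now that we have a simple k8s environment, let's deploy a simple nginx service to test it. First prepare a file named nginx-deployment.yaml with the following content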

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
  labels:
    app: nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:latest
        ports:
        - containerPort: 80

Run kubectl apply to deploy it

[root@vm1 vagrant]# kubectl apply -f nginx-deployment.yaml

deployment.apps/nginx-deployment created

Check whether the pods have come up

[root@vm1 vagrant]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-deployment-585449566-bqls4 1/1 Running 0 20s

nginx-deployment-585449566-n8ssk 1/1 Running 0 20s

Then run kubectl expose to add a service that exposes the deployment

[root@vm1 vagrant]# kubectl expose deployment nginx-deployment --port=80 --type=NodePort

service/nginx-deployment exposed

Use kubectl get svc to see the mapped port

[root@vm1 vagrant]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 4m19s

nginx-deployment NodePort 10.98.176.208 <none> 80:32033/TCP 8s

Test with curl against the exposed port

[root@vm1 vagrant]# curl -I 192.168.205.11:32033

HTTP/1.1 200 OK

Server: nginx/1.19.6

Date: Mon, 21 Dec 2020 07:09:40 GMT

Content-Type: text/html

Content-Length: 612

Last-Modified: Tue, 15 Dec 2020 13:59:38 GMT

Connection: keep-alive

ETag: "5fd8c14a-264"

Accept-Ranges: bytes
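The node port is assigned at random, so it differs on every run. The lookup and the curl test could be combined as sketched below; node_port_from_ports is a hypothetical helper name, and the example assumes the service name nginx-deployment and the node address 192.168.205.11 used in this article.

```shell
#!/bin/sh
# Sketch: extract the node port from a PORT(S) value such as "80:32033/TCP".
# node_port_from_ports is a hypothetical helper name.
node_port_from_ports() {
  ports="${1#*:}"                 # drop the service port and the colon
  printf '%s\n' "${ports%%/*}"    # drop the protocol suffix
}

# Example (not executed here):
# ports=$(kubectl get svc nginx-deployment --no-headers | awk '{print $5}')
# curl -I "192.168.205.11:$(node_port_from_ports "$ports")"
```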
