Setting Up an Android + Qt + OpenCV Environment to Implement "Monochrome Image Colorization"

- October 3, 2019

- Notes

In the project's `.pro` file, point qmake at the headers and libraries of the OpenCV Android SDK (unpacked here to `D:/OpenCV-android-sdk`), and bundle `libopencv_java4.so` into the APK with `ANDROID_EXTRA_LIBS`:

```qmake
android {
    ANDROID_OPENCV = D:/OpenCV-android-sdk/sdk/native

    INCLUDEPATH += \
        $$ANDROID_OPENCV/jni/include/opencv2 \
        $$ANDROID_OPENCV/jni/include

    LIBS += \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_ml.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_objdetect.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_calib3d.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_video.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_features2d.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_highgui.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_flann.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_imgproc.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_dnn.a \
        $$ANDROID_OPENCV/staticlibs/armeabi-v7a/libopencv_core.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/libcpufeatures.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/libIlmImf.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/liblibjasper.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/liblibjpeg-turbo.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/liblibpng.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/liblibprotobuf.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/liblibtiff.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/liblibwebp.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/libquirc.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/libtbb.a \
        $$ANDROID_OPENCV/3rdparty/libs/armeabi-v7a/libtegra_hal.a \
        $$ANDROID_OPENCV/libs/armeabi-v7a/libopencv_java4.so
}

ANDROID_EXTRA_LIBS = \
    D:/OpenCV-android-sdk/sdk/native/libs/armeabi-v7a/libopencv_java4.so
```

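Package the files from the project's `dnn` folder into the APK. Files installed under `/assets` can be opened at runtime through Qt's `assets:/` prefix:

```qmake
data.files += dnn/*.prototxt
data.files += dnn/*.caffemodel
# The test images opened from assets:/dnn/ in the code below must be packaged too
data.files += dnn/*.bmp
data.files += dnn/*.jpg
data.path = /assets/dnn
INSTALLS += data
```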


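As a first test, copy an image out of the APK assets, convert it to grayscale with OpenCV and show it on the `label` widget:

```cpp
{
    // imread() uses ordinary file I/O and cannot open Qt's "assets:/" paths,
    // so copy the image out of the APK into a regular file first
    QFile::copy("assets:/dnn/lena.bmp", "lena.bmp");

    Mat src = imread("lena.bmp");
    cvtColor(src, src, COLOR_BGR2GRAY);

    QPixmap qpixmap = Mat2QImage(src);

    // Show the image on the label
    ui->label->setPixmap(qpixmap);
}
```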
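`COLOR_BGR2GRAY` computes a weighted sum of the three color channels. The sketch below shows that formula for a single pixel in plain C++, using the luma weights listed in OpenCV's color-conversion documentation (Y = 0.299 R + 0.587 G + 0.114 B). The helper name `bgrToGray` is hypothetical, and OpenCV itself uses fixed-point arithmetic, so an individual pixel could in principle differ by one gray level.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>

// Hypothetical helper: gray value of one 8-bit pixel, using the luma weights
// documented for cv::COLOR_BGR2GRAY. Note the argument order: OpenCV stores
// color pixels as B, G, R.
std::uint8_t bgrToGray(std::uint8_t b, std::uint8_t g, std::uint8_t r)
{
    double y = 0.114 * b + 0.587 * g + 0.299 * r;
    long rounded = std::lround(y);           // round to the nearest gray level
    assert(rounded >= 0 && rounded <= 255);  // weights sum to 1, so y stays in range
    return static_cast<std::uint8_t>(rounded);
}
```

For example, pure red (`bgrToGray(0, 0, 255)`) becomes 76, while pure green becomes 150: green contributes most to perceived brightness.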

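`Mat2QImage` converts a `cv::Mat` into a `QPixmap` (despite its name) so that Qt can display it:

```cpp
#include <QImage>
#include <QPixmap>
#include <opencv2/opencv.hpp>

using namespace cv;

// Convert an 8-bit BGR or grayscale Mat into a QPixmap for display
QPixmap Mat2QImage(Mat src)
{
    QImage img;
    Mat rgb;  // local buffer for the converted color image

    // Convert to a pixel layout that Qt can display
    if (src.channels() == 3)
    {
        // OpenCV stores color pixels as BGR; Format_RGB888 expects RGB
        cvtColor(src, rgb, COLOR_BGR2RGB);
        // Pass the row stride explicitly: without bytesPerLine, QImage
        // assumes every scanline is padded to a multiple of 4 bytes
        img = QImage((const unsigned char*)(rgb.data), rgb.cols, rgb.rows, (int)rgb.step, QImage::Format_RGB888);
    }
    else
    {
        img = QImage((const unsigned char*)(src.data), src.cols, src.rows, (int)src.step, QImage::Format_Grayscale8);
    }

    // QImage only wraps the Mat's memory, so deep-copy it before rgb goes out of scope
    return QPixmap::fromImage(img.copy());
}
```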
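Why the row stride matters: a `QImage` constructed from an external buffer without a `bytesPerLine` argument requires every scanline to be 32-bit aligned, while a continuous `cv::Mat` packs its rows back to back. The plain C++ sketch below (hypothetical helper names, no Qt or OpenCV needed) computes both row sizes; whenever they differ, the image would be read with the wrong stride and appear skewed.

```cpp
#include <cassert>
#include <cstddef>

// Bytes per row of a continuous cv::Mat: no padding between rows.
std::size_t packedRowBytes(std::size_t width, std::size_t bytesPerPixel)
{
    return width * bytesPerPixel;
}

// Bytes per row that QImage assumes when no bytesPerLine is given:
// the packed size rounded up to a multiple of 4 bytes (32 bits).
std::size_t alignedRowBytes(std::size_t width, std::size_t bytesPerPixel)
{
    assert(bytesPerPixel > 0);
    return (packedRowBytes(width, bytesPerPixel) + 3) / 4 * 4;
}

// True if constructing the QImage without bytesPerLine would misread the rows.
bool needsExplicitStride(std::size_t width, std::size_t bytesPerPixel)
{
    return packedRowBytes(width, bytesPerPixel) != alignedRowBytes(width, bytesPerPixel);
}
```

For a 225-pixel-wide RGB888 image, the packed row is 675 bytes but QImage would step 676 bytes per row; a width of 224 (672 bytes) happens to be safe. Passing `Mat::step` avoids depending on the image width at all.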

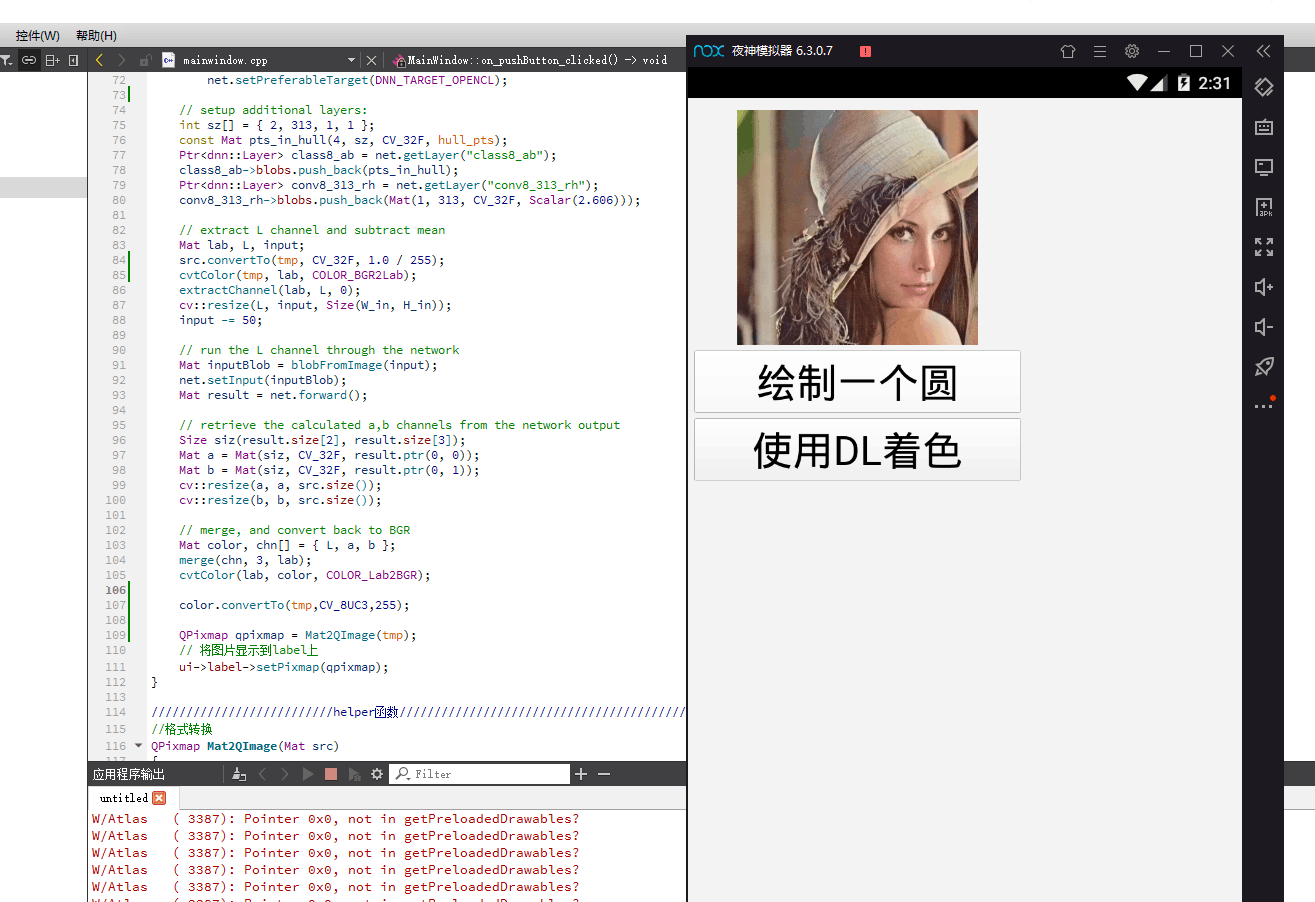

Then the colorization itself, which closely follows the colorization example in OpenCV's `samples/dnn` directory:

```cpp
{
    // Copy the test image and the model files out of the APK assets
    QFile::copy("assets:/dnn/lena.jpg", "lena.jpg");
    QFile::copy("assets:/dnn/colorization_deploy_v2.prototxt", "colorization_deploy_v2.prototxt");
    QFile::copy("assets:/dnn/colorization_release_v2.caffemodel", "colorization_release_v2.caffemodel");

    Mat tmp;  // scratch buffer
    Mat src = imread("lena.jpg");
    // Turn the color photo into a grayscale one (kept as 3-channel BGR)
    cvtColor(src, tmp, COLOR_BGR2GRAY);
    cvtColor(tmp, src, COLOR_GRAY2BGR);

    std::string modelTxt = "colorization_deploy_v2.prototxt";
    std::string modelBin = "colorization_release_v2.caffemodel";
    bool useOpenCL = true;

    // fixed input size for the pretrained network
    const int W_in = 224;
    const int H_in = 224;
    dnn::Net net = dnn::readNetFromCaffe(modelTxt, modelBin);
    if (useOpenCL)
        net.setPreferableTarget(dnn::DNN_TARGET_OPENCL);

    // setup additional layers
    // (hull_pts is the large constant array listed further below;
    // it must be declared before this code)
    int sz[] = { 2, 313, 1, 1 };
    const Mat pts_in_hull(4, sz, CV_32F, hull_pts);
    Ptr<dnn::Layer> class8_ab = net.getLayer("class8_ab");
    class8_ab->blobs.push_back(pts_in_hull);
    Ptr<dnn::Layer> conv8_313_rh = net.getLayer("conv8_313_rh");
    conv8_313_rh->blobs.push_back(Mat(1, 313, CV_32F, Scalar(2.606)));

    // extract L channel and subtract mean
    Mat lab, L, input;
    src.convertTo(tmp, CV_32F, 1.0 / 255);
    cvtColor(tmp, lab, COLOR_BGR2Lab);
    extractChannel(lab, L, 0);
    cv::resize(L, input, Size(W_in, H_in));
    input -= 50;

    // run the L channel through the network
    Mat inputBlob = dnn::blobFromImage(input);
    net.setInput(inputBlob);
    Mat result = net.forward();

    // retrieve the calculated a,b channels from the network output
    Size siz(result.size[2], result.size[3]);
    Mat a = Mat(siz, CV_32F, result.ptr(0, 0));
    Mat b = Mat(siz, CV_32F, result.ptr(0, 1));
    cv::resize(a, a, src.size());
    cv::resize(b, b, src.size());

    // merge, and convert back to BGR
    Mat color, chn[] = { L, a, b };
    merge(chn, 3, lab);
    cvtColor(lab, color, COLOR_Lab2BGR);
    color.convertTo(tmp, CV_8UC3, 255);

    QPixmap qpixmap = Mat2QImage(tmp);
    // Show the image on the label
    ui->label->setPixmap(qpixmap);
}
```
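The network output `result` is a 4-D blob laid out as N×C×H×W, with one plane for a and one for b, stored contiguously in row-major order. That is why `result.ptr(0, 0)` and `result.ptr(0, 1)` point at the starts of the a and b planes. The plain C++ sketch below spells out that offset arithmetic; `nchwOffset` and `extractPlane` are hypothetical helpers, and unlike wrapping `result.ptr()` in a `Mat`, `extractPlane` copies the data.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper: flat index of element (n, c, h, w) in a contiguous,
// row-major blob of shape N x C x H x W.
std::size_t nchwOffset(std::size_t n, std::size_t c, std::size_t h, std::size_t w,
                       std::size_t C, std::size_t H, std::size_t W)
{
    return ((n * C + c) * H + h) * W + w;
}

// Copy channel c of image n out of the blob (one H x W plane).
std::vector<float> extractPlane(const std::vector<float>& blob, std::size_t n, std::size_t c,
                                std::size_t C, std::size_t H, std::size_t W)
{
    assert(c < C);
    const std::size_t start = nchwOffset(n, c, 0, 0, C, H, W);
    assert(start + H * W <= blob.size());
    auto first = blob.begin() + static_cast<std::ptrdiff_t>(start);
    return std::vector<float>(first, first + static_cast<std::ptrdiff_t>(H * W));
}
```

For a toy 1×2×2×3 blob, the second plane (the "b" plane) starts at offset 1 × 2 × 3 = 6.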

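The `colorization_release_v2.caffemodel` and `colorization_deploy_v2.prototxt` files have to be downloaded separately and placed in the project's `dnn` folder. Although the code is long, only a few lines do the real work: `dnn::readNetFromCaffe()` loads the model, `net.setInput()` feeds in the input blob, and `net.forward()` runs the network.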

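Everything else exists to convert the image and the model data into the form that `forward()` expects. Among other things, the code relies on one large constant array: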

```cpp
static float hull_pts[] = {
-90., -90., -90., -90., -90., -80., -80., -80., -80., -80., -80., -80., -80., -70., -70., -70., -70., -70., -70., -70., -70.,
-70., -70., -60., -60., -60., -60., -60., -60., -60., -60., -60., -60., -60., -60., -50., -50., -50., -50., -50., -50., -50., -50.,
-50., -50., -50., -50., -50., -50., -40., -40., -40., -40., -40., -40., -40., -40., -40., -40., -40., -40., -40., -40., -40., -30.,
-30., -30., -30., -30., -30., -30., -30., -30., -30., -30., -30., -30., -30., -30., -30., -20., -20., -20., -20., -20., -20., -20.,
-20., -20., -20., -20., -20., -20., -20., -20., -20., -10., -10., -10., -10., -10., -10., -10., -10., -10., -10., -10., -10., -10.,
-10., -10., -10., -10., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 0., 10., 10., 10., 10., 10., 10., 10.,
10., 10., 10., 10., 10., 10., 10., 10., 10., 10., 10., 20., 20., 20., 20., 20., 20., 20., 20., 20., 20., 20., 20., 20., 20., 20.,
20., 20., 20., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 30., 40., 40., 40., 40.,
40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 40., 50., 50., 50., 50., 50., 50., 50., 50., 50., 50.,
50., 50., 50., 50., 50., 50., 50., 50., 50., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60., 60.,
60., 60., 60., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 70., 80., 80., 80.,
80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 80., 90., 90., 90., 90., 90., 90., 90., 90., 90., 90.,
90., 90., 90., 90., 90., 90., 90., 90., 90., 100., 100., 100., 100., 100., 100., 100., 100., 100., 100., 50., 60., 70., 80., 90.,
20., 30., 40., 50., 60., 70., 80., 90., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90., -20., -10., 0., 10., 20., 30., 40., 50.,
60., 70., 80., 90., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90., 100., -40., -30., -20., -10., 0., 10., 20.,
30., 40., 50., 60., 70., 80., 90., 100., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90., 100., -50.,
-40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90., 100., -60., -50., -40., -30., -20., -10., 0., 10., 20.,
30., 40., 50., 60., 70., 80., 90., 100., -70., -60., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90.,
100., -80., -70., -60., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90., -80., -70., -60., -50.,
-40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., 90., -90., -80., -70., -60., -50., -40., -30., -20., -10.,
0., 10., 20., 30., 40., 50., 60., 70., 80., 90., -100., -90., -80., -70., -60., -50., -40., -30., -20., -10., 0., 10., 20., 30.,
40., 50., 60., 70., 80., 90., -100., -90., -80., -70., -60., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70.,
80., -110., -100., -90., -80., -70., -60., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., -110., -100.,
-90., -80., -70., -60., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., 80., -110., -100., -90., -80., -70.,
-60., -50., -40., -30., -20., -10., 0., 10., 20., 30., 40., 50., 60., 70., -110., -100., -90., -80., -70., -60., -50., -40., -30.,
-20., -10., 0., 10., 20., 30., 40., 50., 60., 70., -90., -80., -70., -60., -50., -40., -30., -20., -10., 0.
};
```

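These values are closely tied to the model the original authors trained: they are the (a, b) coordinates of the 313 quantized color bins into which the network classifies each pixel, with the first 313 numbers holding the a coordinates and the last 313 the b coordinates (matching the `{ 2, 313, 1, 1 }` shape given to `pts_in_hull`). To fully understand the DNN code, you need to understand how the corresponding model was trained.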
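To illustrate how these constants are used: as far as I can tell from `colorization_deploy_v2.prototxt`, `conv8_313_rh` multiplies the 313 per-pixel class scores by 2.606, a softmax turns them into probabilities, and `class8_ab` is a 1×1 convolution whose weights are `pts_in_hull`, so each output pixel is the probability-weighted average of the bin centers. The plain C++ sketch below reproduces that computation for a single pixel, assuming the flat layout of `hull_pts` (first K values are a coordinates, next K are b coordinates). `decodeAb` is a hypothetical name, not part of OpenCV.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <utility>
#include <vector>

// Sketch of conv8_313_rh -> softmax -> class8_ab for ONE pixel (assumed layer roles).
// logits: the K raw class scores of this pixel (K = 313 in the real model)
// hull:   2*K values; hull[q] is the a coordinate, hull[K + q] the b coordinate of bin q
// scale:  the factor pushed into conv8_313_rh (2.606 in the code above)
std::pair<float, float> decodeAb(const std::vector<float>& logits,
                                 const std::vector<float>& hull, float scale)
{
    const std::size_t K = logits.size();
    assert(K > 0 && hull.size() == 2 * K);

    // Scaled softmax; subtracting the maximum keeps std::exp from overflowing.
    const float maxLogit = *std::max_element(logits.begin(), logits.end());
    std::vector<double> p(K);
    double sum = 0.0;
    for (std::size_t q = 0; q < K; ++q) {
        p[q] = std::exp(scale * (logits[q] - maxLogit));
        sum += p[q];
    }

    // Probability-weighted average of the bin centers (the 1x1 convolution).
    double a = 0.0, b = 0.0;
    for (std::size_t q = 0; q < K; ++q) {
        a += p[q] / sum * hull[q];
        b += p[q] / sum * hull[K + q];
    }
    return {static_cast<float>(a), static_cast<float>(b)};
}
```

In the real network this would run for every pixel of the output, with K = 313 and `hull` set to `hull_pts`. A scale above 1 sharpens the distribution toward the most likely bins before averaging, which pulls the result toward more saturated colors than a plain average would.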

How to use NoxPlayer ("夜神") as a virtual device for debugging the program